The Real-Time Advantage: Redefining the Enterprise AI Voice OS

In the rapidly evolving landscape of conversational artificial intelligence, the distinction between a rudimentary AI voice agent and a comprehensive enterprise AI Voice OS is profound. While basic voice agents rely on static scripts, delayed API calls, and pre-programmed dialogue trees, an enterprise-grade AI Voice OS operates as a dynamic, context-aware ecosystem. The most critical differentiator in this architecture is the capability for real-time CRM data integration. For enterprises, the ability to synthesize, retrieve, and act upon live customer data during an active voice interaction is not merely an operational upgrade; it is a strategic imperative that directly dictates commercial success.

Many top-ranking platforms superficially cover voice AI features, presenting generic 'best of' lists that fail to address the complex architectural realities of enterprise deployment. They treat CRM integration as an afterthought—a simple webhook or an asynchronous batch update. True real-time CRM data integration requires a robust orchestration layer that handles immediate data synchronization, rigorous latency budgets, and stringent data governance. This definitive guide serves as a technical primer and migration blueprint for software architects, technical evaluators, and enterprise decision-makers. It thoroughly explores the architectural considerations for real-time data flow, the deployment of Alchemyst's Kathan engine, and the structured ROI frameworks necessary to justify enterprise AI Voice OS adoption.

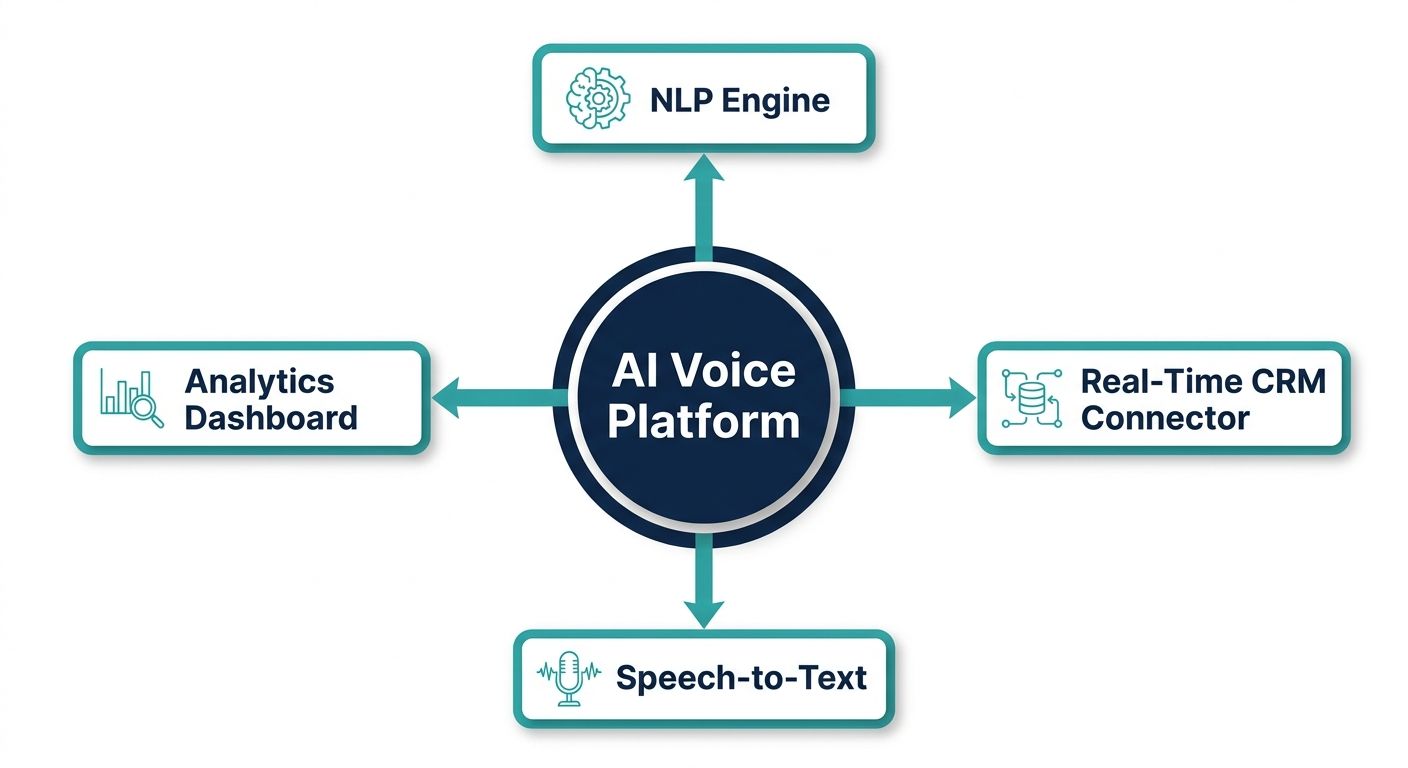

The Anatomy of an Enterprise AI Voice Platform

To understand the mechanics of an enterprise AI Voice OS with real-time CRM data integration, one must move beyond the superficial paradigm of 'prompt engineering.' In highly complex enterprise environments, prompt engineering is insufficient. Instead, the focus must shift to context engineering. Context engineering involves the systematic retrieval, filtering, and structuring of relevant enterprise data before the Large Language Model (LLM) ever generates a response. This is where the core competitive advantage of an advanced AI Voice OS lies.

At the heart of this operational superiority is Alchemyst's Kathan engine. The Kathan engine uses a computational process known as Context Arithmetic. Rather than blindly feeding an LLM vast, unstructured database dumps, Context Arithmetic treats data retrieval as a set-algebraic pipeline. It systematically determines the most relevant pieces of information the voice agent needs to respond accurately to the user's specific intent, factoring in the user's real-time CRM profile.

The Five-Stage Pipeline for Context Determination

Integrating real-time CRM data into a voice interaction within milliseconds requires a highly optimized architectural pipeline. The Kathan engine executes context determination through five distinct stages to ensure the voice agent is both intelligent and exceptionally fast:

- Stage 1: Semantic Similarity Search: The system ingests the user's spoken utterance, transcribes it via ultra-low latency ASR (Automatic Speech Recognition), and converts the intent into vector embeddings. It then performs a high-speed semantic similarity search against the enterprise's vector database, locating historical CRM context, past interaction transcripts, and relevant knowledge base articles.

- Stage 2: Metadata Filtering: Raw semantic similarity is prone to returning outdated or permission-restricted data. The pipeline applies rigorous metadata filtering synchronized directly with the live CRM. If a user's subscription tier just changed five seconds ago, the metadata filter instantaneously restricts or grants access to corresponding context, ensuring the AI agent only operates on the latest source of truth.

- Stage 3: Deduplication: Real-time CRM integrations often pull overlapping data from multiple endpoints (e.g., Salesforce, Zendesk, and internal ERPs). The Kathan engine performs real-time deduplication to remove redundant context payloads, which keeps the context window small, reduces token costs, and lowers the risk of hallucinations caused by conflicting or repeated records.

- Stage 4: Contextual Ranking: Not all CRM data is equally important. The pipeline ranks the filtered, deduplicated data using a specialized scoring algorithm. Active support tickets and recent purchasing behavior are weighted higher than demographic data from three years ago.

- Stage 5: Set-Algebraic Pipeline Execution: Finally, the system applies set operations (unions, intersections, and differences of data sets) to assemble the final contextual payload. The aim is a payload that contains the context the LLM needs to formulate an accurate, business-aligned response without exceeding token limits or latency budgets.
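The five stages can be illustrated with a minimal, self-contained Python sketch. It is not the Kathan engine's implementation, which this guide does not describe: the `ContextItem` fields, the kind weights, the age-decay ranking formula, and the word count used as a token estimate are all assumptions chosen for illustration, and the brute-force similarity search stands in for a real vector database.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class ContextItem:
    # Hypothetical record shape, for illustration only.
    item_id: str
    text: str
    embedding: tuple
    tier: str        # subscription tier the item is valid for
    age_days: int
    kind: str        # e.g. "open_ticket", "purchase", "demographic"

# Assumed weights: active tickets outrank purchases, which outrank old demographics.
KIND_WEIGHT = {"open_ticket": 3.0, "purchase": 2.0, "demographic": 0.5}

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def build_context(query_vec, items, live_tier, must_include, exclude_ids, token_budget, k=5):
    by_id = {it.item_id: it for it in items}
    # Stage 1: semantic similarity search (brute force here; a vector database in practice).
    hits = sorted(items, key=lambda it: cosine(query_vec, it.embedding), reverse=True)[:k]
    # Stage 2: metadata filtering against a live CRM value (here, the current tier).
    hits = [it for it in hits if it.tier == live_tier]
    # Stage 3: deduplication of the same fact arriving from several source systems.
    seen, unique = set(), []
    for it in hits:
        key = it.text.strip().lower()
        if key not in seen:
            seen.add(key)
            unique.append(it)
    # Stage 4: contextual ranking: similarity x kind weight, decayed by record age.
    def score(it):
        weight = KIND_WEIGHT.get(it.kind, 1.0)
        return cosine(query_vec, it.embedding) * weight / (1 + it.age_days / 365)
    ranked = [it.item_id for it in sorted(unique, key=score, reverse=True)]
    # Stage 5: set algebra: (retrieved | must_include) - excluded, then fit the budget.
    selected = (set(ranked) | set(must_include)) - set(exclude_ids)
    ordered = [i for i in must_include if i in selected]
    ordered += [i for i in ranked if i in selected and i not in ordered]
    payload, used = [], 0
    for item_id in ordered:
        cost = len(by_id[item_id].text.split())  # crude stand-in for a real tokenizer
        if used + cost <= token_budget:
            payload.append(item_id)
            used += cost
    return payload
```

Called with a query vector, the live tier read from the CRM, IDs that must always be present (such as an account-owner record), and IDs to exclude, `build_context` returns the payload's item IDs in priority order. Mandatory items are placed first so that a tight budget trims low-ranked retrieval results rather than required context.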

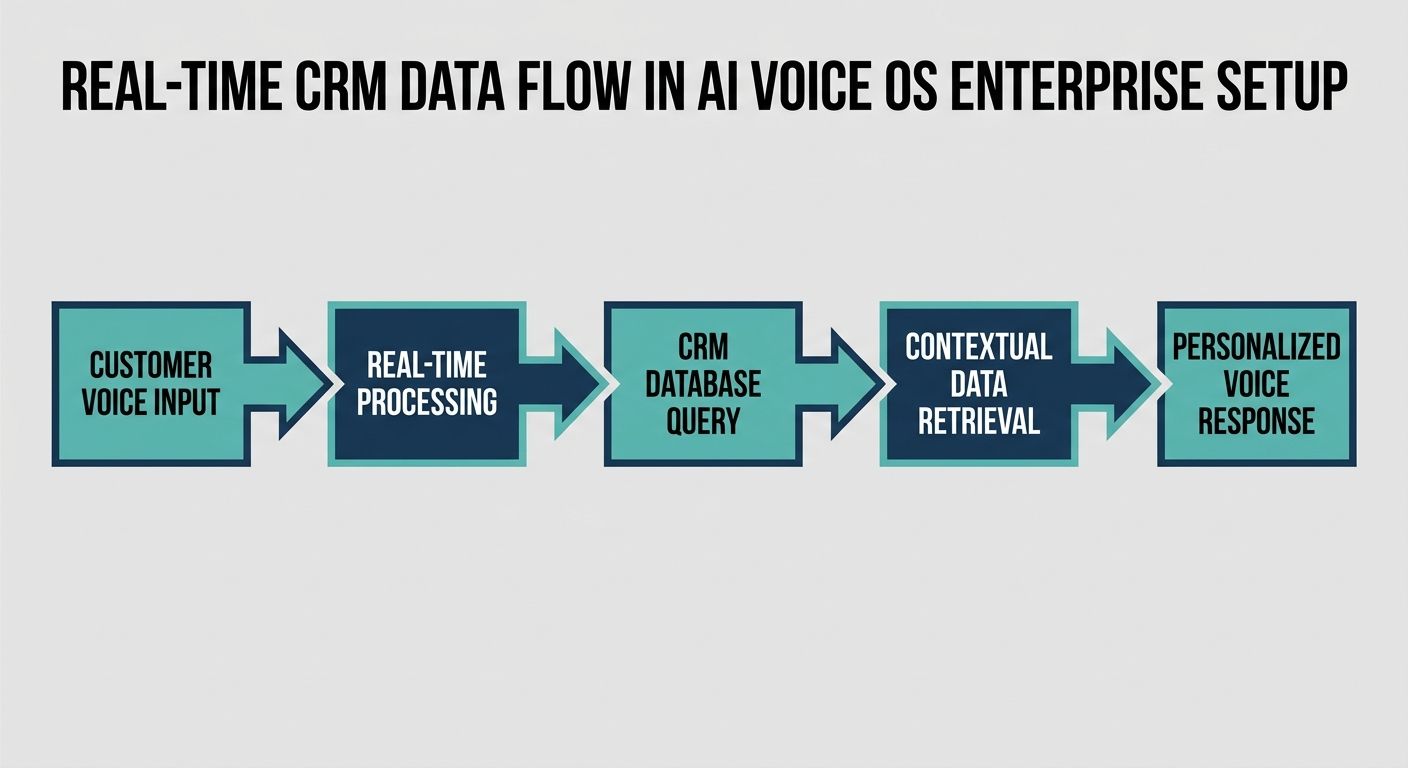

Architecting Real-Time CRM Data Flow

Achieving true real-time synchronization between an AI Voice OS and an enterprise CRM platform like Salesforce, HubSpot, or a custom-built ERP requires moving away from traditional REST API polling. In a voice environment, latency is the ultimate enemy. A delay of over 500 milliseconds creates unnatural conversational pauses, destroying the user experience. Architecting real-time flow requires advanced integration strategies.

API Strategies and Bidirectional Streaming

Enterprise AI voice platforms must utilize WebSockets and WebRTC for continuous, full-duplex communication channels. While the voice stream is processed, a parallel data stream must maintain a persistent connection with the CRM's event-driven architecture. When an enterprise customer updates a record, the CRM pushes an event via a streaming API (such as Salesforce PushTopic or Change Data Capture events). The AI Voice OS context layer intercepts this push instantly, updating the active voice session's working memory. This ensures that if a customer completes a payment on their web dashboard while speaking to the AI agent on the phone, the agent instantly acknowledges the payment without needing to place the user on hold to 'check the system'.
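A minimal sketch of how the context layer might apply pushed CRM changes to an active call's working memory. It assumes change events have already been received from the CRM's streaming API and normalized into a small dictionary; the event shape (`entity`, `account_id`, `changed_fields`) and the names `VoiceSession` and `ContextLayer` are hypothetical simplifications, not the actual Salesforce Change Data Capture payload or any vendor API.

```python
import asyncio
from dataclasses import dataclass, field

@dataclass
class VoiceSession:
    # Hypothetical per-call working memory kept by the context layer.
    account_id: str
    working_memory: dict = field(default_factory=dict)

class ContextLayer:
    """Routes normalized CRM change events to the live voice sessions they affect."""
    def __init__(self):
        self.sessions_by_account = {}

    def register(self, session):
        self.sessions_by_account.setdefault(session.account_id, []).append(session)

    def apply_event(self, event):
        # Assumed event shape: {"entity": ..., "account_id": ..., "changed_fields": {...}}
        for session in self.sessions_by_account.get(event["account_id"], []):
            record = session.working_memory.setdefault(event["entity"], {})
            record.update(event["changed_fields"])

async def consume_events(queue, layer):
    # Runs alongside the audio stream; None is used as a shutdown sentinel.
    while True:
        event = await queue.get()
        if event is None:
            break
        layer.apply_event(event)
```

In the payment example above, an event such as `{"entity": "Invoice", "account_id": "ACME-001", "changed_fields": {"status": "Paid"}}` lands in the session's working memory, so the next response can reference the payment without a fresh CRM query.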

Managing Data Latency and Edge Compute

To keep conversational latency under the strict 500ms threshold, data latency management is paramount. Enterprise architects must implement distributed caching mechanisms (like Redis) at the network edge. Frequently accessed CRM metadata—such as customer authentication states and routing rules—should be cached geographically close to the voice ingestion nodes. Furthermore, the AI Voice OS must decouple the heavy, asynchronous CRM write operations from the synchronous voice generation loop. The system can acknowledge the user's intent, begin generating the voice response, and simultaneously write the interaction log back to the CRM in a non-blocking background thread.

Data Governance and Security Protocols for Immediate Access

Deploying an enterprise AI Voice OS with real-time CRM data integration introduces significant security considerations. Top-tier competitors frequently overlook the detailed data governance required when a conversational AI has instant, programmatic access to large stores of PII (Personally Identifiable Information) and payment card data covered by PCI DSS (the Payment Card Industry Data Security Standard).

Zero-Trust Architecture and RBAC Integration

The AI Voice OS must operate on a Zero-Trust architecture. Every data request made by the context layer to the CRM must be authenticated and authorized. The system must natively mirror the CRM's Role-Based Access Control (RBAC). In effect, the AI agent should 'log in' with the exact permissions of the human agent it is augmenting or replacing. If a record is locked in the CRM, the AI must strictly inherit that restriction. The Kathan engine addresses this by integrating permission sets into its metadata filtering stage, executing a hard drop on any semantic search results that violate the synchronized access policies.
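To illustrate a hard drop at the metadata-filtering stage, the Python sketch below mirrors a human agent's CRM permissions as a set of readable object types plus a set of locked record IDs, and removes any search hit that falls outside them. This permission model is a deliberate simplification and an assumption; real CRMs such as Salesforce combine profiles, permission sets, sharing rules, and field-level security. `PermissionSet`, `SearchHit`, and `enforce_access` are hypothetical names.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PermissionSet:
    # Hypothetical, simplified mirror of the human agent's CRM permissions.
    readable_objects: frozenset
    locked_record_ids: frozenset

@dataclass(frozen=True)
class SearchHit:
    record_id: str
    object_type: str
    text: str

def enforce_access(hits, perms):
    """Drop (not mask) every hit the mirrored permissions do not allow; deny by default."""
    allowed = []
    for hit in hits:
        if hit.object_type not in perms.readable_objects:
            continue  # object type not readable for this role: hard drop
        if hit.record_id in perms.locked_record_ids:
            continue  # record locked in the CRM: inherit the restriction
        allowed.append(hit)
    return allowed
```

Dropping the hit entirely, rather than redacting it, means a restricted record never enters the LLM's context window at all.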

On-the-Fly PII Redaction and Compliance

For enterprises bound by HIPAA or GDPR, or audited against SOC 2, streaming live voice data into an LLM presents a compliance risk. An enterprise-grade AI Voice OS addresses this with an intermediary compliance layer. As live CRM data is pulled to construct the prompt, or as the user speaks sensitive information (like a Social Security Number), the architecture uses real-time Named Entity Recognition (NER) models to detect PII and replace it with tokens before it reaches the foundation LLM. After the interaction, the tokens are mapped back to their original values when the record is written to the CRM, so the model never sees raw PII while the CRM record stays complete and the conversation keeps its real-time context.
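The tokenize-then-restore flow can be sketched in a few lines of Python. To keep the example self-contained, it swaps the NER model described above for a regular expression that only recognizes US Social Security Numbers in `NNN-NN-NNNN` form; a production system would use a trained NER model and a secured, access-controlled token vault. `TokenVault` and the `<PII_n>` token format are illustrative assumptions.

```python
import re

# Regex stand-in for an NER model (assumption): matches SSNs like 123-45-6789 only.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

class TokenVault:
    """Hypothetical in-memory vault; real deployments need encrypted, audited storage."""
    def __init__(self):
        self._by_token = {}

    def redact(self, text):
        # Replace each detected value with a unique placeholder token.
        def replace(match):
            token = f"<PII_{len(self._by_token) + 1}>"
            self._by_token[token] = match.group(0)
            return token
        return SSN_PATTERN.sub(replace, text)

    def restore(self, text):
        # Map placeholder tokens back to original values before the CRM write.
        for token, value in self._by_token.items():
            text = text.replace(token, value)
        return text
```

Only the output of `redact` is sent to the LLM; `restore` runs when the interaction record is written back to the CRM.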

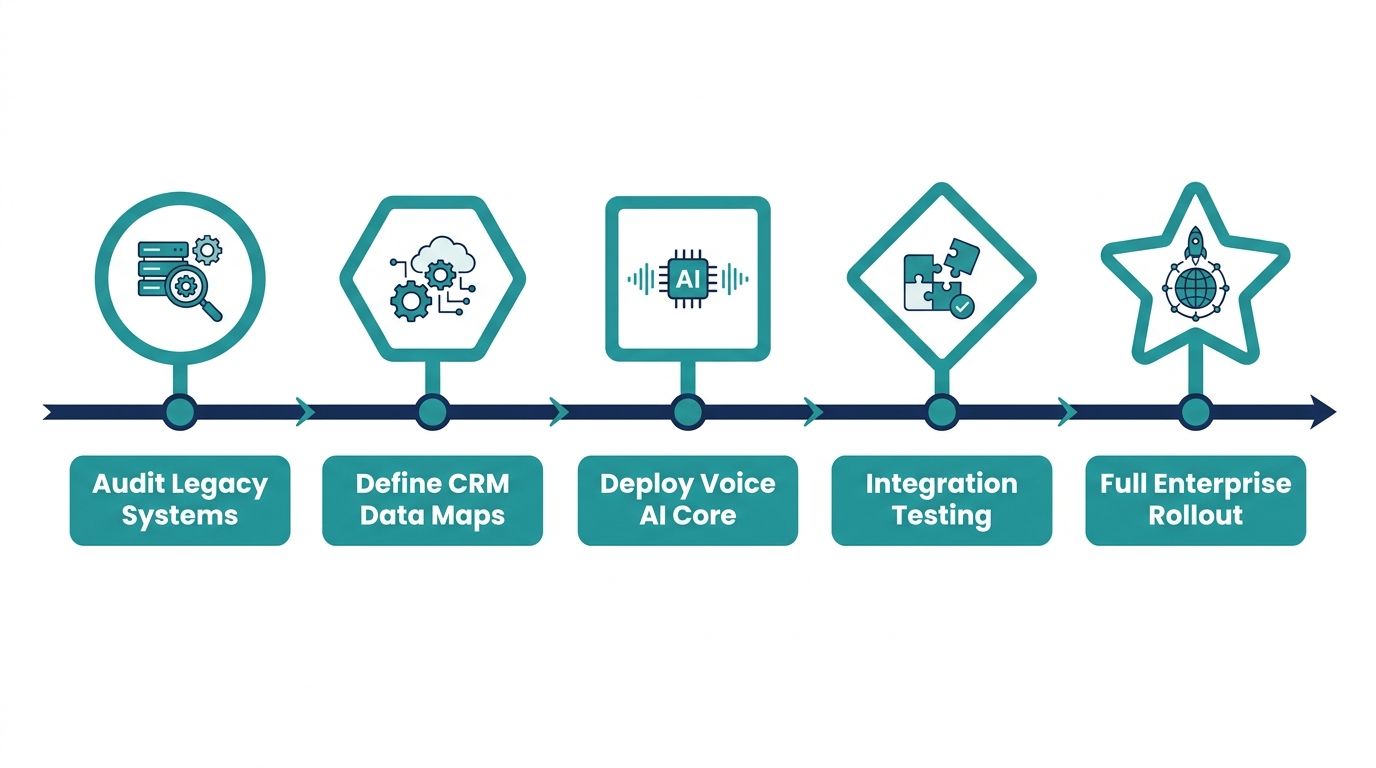

The Definitive Migration Blueprint: Implementing AI Voice OS

Transitioning from legacy IVR systems or isolated chatbots to a comprehensive, context-aware AI Voice OS requires a structured, multi-phase migration blueprint. Superficial cost-savings estimates are insufficient for enterprise technical teams. Here is the rigorous integration path required for successful deployment.

Phase 1: Technical Integration and System Audits

Before any AI model is deployed, enterprise architects must audit their existing data silos. This phase involves documenting all relevant CRM endpoints, mapping current network latencies, and evaluating API rate limits. Legacy on-premise CRMs may require the deployment of secure reverse proxies to safely expose real-time webhooks to the cloud-based AI Voice OS. During this phase, teams must also establish the target Latency Budget for the end-to-end voice loop, factoring in ASR, Context Retrieval, LLM Inference, and TTS (Text-to-Speech) generation.
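A latency budget is easiest to enforce when it is written down as data and checked mechanically. The sketch below uses the four components named in this phase and the 500 ms threshold discussed earlier; the per-stage numbers are placeholders for planning, not measurements or vendor figures, and `LatencyBudget` is a hypothetical helper.

```python
from dataclasses import dataclass, field

@dataclass
class LatencyBudget:
    """End-to-end voice-loop budget: ASR, context retrieval, LLM inference, TTS."""
    target_ms: int
    stages_ms: dict = field(default_factory=dict)

    def total_ms(self):
        return sum(self.stages_ms.values())

    def headroom_ms(self):
        return self.target_ms - self.total_ms()

    def over_budget(self):
        return self.total_ms() > self.target_ms

# Placeholder allocations (hypothetical); replace with numbers measured during the audit.
budget = LatencyBudget(
    target_ms=500,
    stages_ms={"asr": 100, "context_retrieval": 80, "llm_inference": 200, "tts": 90},
)
print(budget.total_ms(), budget.headroom_ms(), budget.over_budget())  # 470 30 False
```

Keeping the budget explicit makes trade-offs visible: any milliseconds added to context retrieval must be taken from another stage or from the remaining headroom.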

Phase 2: CRM Data Mapping and Context Engineering

Once connectivity is established, technical evaluators must engage in comprehensive data mapping. This is not a simple 1:1 field mapping. It involves defining exactly which CRM objects (e.g., Contact, Account, Case, Opportunity) are necessary for specific conversational intents. Developers utilize the Kathan engine's framework to build the semantic similarity indices. This phase includes backfilling the vector database with historical interaction logs to prime the context engine, ensuring the AI Voice OS understands the historical nuance of enterprise-specific terminology and product names.
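One way to make the intent-to-object mapping explicit is a declarative table that the context layer consults for each intent, so retrieval is scoped to only the fields that intent needs. The intent names and fields below are hypothetical examples (some, such as `ServiceTier` and `BillingStatus`, are not standard CRM fields), and the object names simply follow those listed in this phase; this is a sketch, not a prescribed schema.

```python
# Hypothetical intent -> CRM object -> fields mapping used to scope retrieval.
INTENT_CONTEXT_MAP = {
    "report_outage": {"Account": ["Name", "ServiceTier"], "Case": ["Status", "Subject"]},
    "billing_question": {"Account": ["Name", "BillingStatus"], "Opportunity": ["Amount", "CloseDate"]},
    "update_contact": {"Contact": ["Email", "Phone", "MailingAddress"]},
}

def fields_for_intent(intent, crm_record):
    """Project a raw CRM snapshot down to only the fields mapped for this intent."""
    mapping = INTENT_CONTEXT_MAP.get(intent, {})
    projected = {}
    for obj, fields in mapping.items():
        source = crm_record.get(obj, {})
        projected[obj] = {f: source[f] for f in fields if f in source}
    return projected
```

Unmapped intents return an empty context rather than the whole record, which keeps the default behavior conservative.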

Phase 3: The Context Layer Implementation and Shadow Testing

The implementation phase focuses on deploying the context arithmetic pipeline. Rather than launching the voice agent live to customers immediately, enterprises must employ 'Shadow Testing'. In this environment, the AI Voice OS silently listens to live human-to-human call streams, actively fetching real-time CRM data and generating parallel, unseen responses. Engineers evaluate the AI's context retrieval accuracy against what the human agent actually required to solve the issue. The deduplication algorithms and ranking heuristics within the Kathan engine are fine-tuned during this phase to maximize precision and recall.
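Shadow-test scoring can be reduced to comparing, per call, the set of CRM records the AI retrieved with the set the human agent actually used. The sketch below computes micro-averaged precision and recall over those sets; treating "records the human opened" as ground truth is an assumption about how a team might label shadow calls, and `shadow_precision_recall` is a hypothetical helper.

```python
def shadow_precision_recall(calls):
    """calls: iterable of (retrieved_ids, used_by_human_ids); returns micro-averaged (precision, recall)."""
    true_pos = retrieved_total = relevant_total = 0
    for retrieved, used in calls:
        retrieved, used = set(retrieved), set(used)
        true_pos += len(retrieved & used)      # records the AI fetched that the human needed
        retrieved_total += len(retrieved)
        relevant_total += len(used)
    precision = true_pos / retrieved_total if retrieved_total else 0.0
    recall = true_pos / relevant_total if relevant_total else 0.0
    return precision, recall
```

Low precision points at the ranking and deduplication stages (too much noise in the payload); low recall points at similarity search or metadata filters that are too strict.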

High-Impact Enterprise Use Cases for Real-Time Synchronization

The true value of an enterprise AI Voice OS with real-time CRM data integration becomes apparent when evaluating specific, transformative use cases that legacy solutions cannot execute.

Dynamic Customer Support Escalations

Consider an enterprise telecommunications provider. A customer calls to report an outage. A legacy IVR will ask for an account number and blindly read a generic outage message. A context-aware AI Voice OS, operating in real-time, instantly cross-references the incoming phone number with the CRM, identifies the customer as a high-value enterprise account, checks live network telemetry data via API, and correlates it with open JIRA tickets. The AI agent immediately greets the user: 'Hello, I see you are calling from the ACME Corp account. We have detected the localized fiber issue affecting your headquarters, and our engineering team has an estimated resolution time of 45 minutes.' This zero-friction, highly contextualized interaction relies entirely on sub-second CRM data integration.

Live Sales Enablement and Cross-Selling

In proactive outbound sales scenarios, an enterprise AI Voice OS uses real-time CRM triggers to initiate contextual conversations. If a high-value lead downloads a technical whitepaper and simultaneously opens a pricing page, the CRM triggers the Voice OS. The AI agent initiates a call, instantly pulling the lead's historical touchpoints, current company firmographics, and the exact whitepaper topic. It does not just read a script; it tailors the pitch dynamically based on live context arithmetic, with the goal of raising conversion rates.
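The trigger described here is essentially an event-correlation rule. A minimal Python sketch, assuming the CRM or marketing platform exposes timestamped engagement events per lead: an outbound call fires only when a whitepaper download and a pricing-page view occur within a configurable window of each other. The event names and the 30-minute default window are hypothetical.

```python
from datetime import datetime, timedelta

def should_trigger_call(events, window=timedelta(minutes=30)):
    """events: list of (event_type, timestamp) for one lead; event names are hypothetical.
    Returns True if a whitepaper download and a pricing-page view fall within `window`."""
    downloads = [ts for kind, ts in events if kind == "whitepaper_download"]
    pricing_views = [ts for kind, ts in events if kind == "pricing_page_view"]
    return any(abs(d - p) <= window for d in downloads for p in pricing_views)
```

For example, a download at 09:00 followed by a pricing-page view at 09:10 fires the trigger, while the same view two hours later does not. A real deployment would also gate on a lead-value score before placing the call.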

Calculating ROI: A Structured Framework Beyond Cost Savings

Top-ranking competitor content often reduces ROI to simplistic metrics like 'reduced headcount' or 'lowered operational costs.' For enterprise technical evaluators, ROI must be calculated through a rigorous, multidimensional framework that captures the true financial impact of real-time context integration.

Metric 1: Specific Cost Analysis via CPI Reduction

Instead of generic savings, calculate the precise Cost Per Interaction (CPI). By using an AI Voice OS with real-time CRM data integration, enterprises can significantly reduce Average Handling Time (AHT). Every second an agent spends placing a user on hold to 'load their profile' is a hard financial cost. The Kathan engine is designed to remove this CRM retrieval wait from the conversation. If an enterprise handles 100,000 calls monthly, reducing AHT by 45 seconds per call through instant context retrieval yields measurable cost avoidance that can be modeled in a financial spreadsheet.
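The paragraph's own figures make a worked example. The call volume (100,000 per month) and time saved (45 seconds per call) are taken from the text; the fully loaded cost per agent hour is a hypothetical input that each enterprise would replace with its own figure.

```python
def monthly_aht_savings(calls_per_month, seconds_saved_per_call, loaded_cost_per_hour):
    """Agent hours saved per month and the labor cost those hours represent."""
    hours_saved = calls_per_month * seconds_saved_per_call / 3600
    return hours_saved, hours_saved * loaded_cost_per_hour

def labor_cost_per_interaction(aht_seconds, loaded_cost_per_hour):
    """Labor component of CPI: handling time priced at the fully loaded hourly rate."""
    return aht_seconds * loaded_cost_per_hour / 3600

# 100,000 calls and 45 s come from the text; $40/hour is a hypothetical loaded rate.
hours, avoided = monthly_aht_savings(100_000, 45, loaded_cost_per_hour=40.0)
print(hours, avoided)  # 1250.0 50000.0
```

That is 4,500,000 seconds, or 1,250 agent hours, per month. At the placeholder rate, a call whose AHT drops from 360 to 315 seconds moves its labor CPI from $4.00 to $3.50.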

Metric 2: First Contact Resolution (FCR) Enhancement

Legacy AI agents often fail and escalate to human agents because they lack the context needed to complete complex workflows. This double touch drastically increases operational costs. By leveraging the real-time context arithmetic pipeline, the AI Voice OS can confidently execute multi-step CRM updates (e.g., processing a return, updating a shipping address, and issuing a prorated refund within a single call). An enterprise tracking FCR can attribute an increase in autonomous resolutions to the depth of the CRM integration and assign a concrete revenue retention value to the AI implementation.
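A simple way to quantify this is to compare first contact resolution before and after the integration and price the escalations avoided. All inputs below are hypothetical, and attributing the whole uplift to the CRM integration assumes nothing else (staffing, call mix, seasonality) moved FCR in the same period; a holdout group or A/B split would be needed to confirm that.

```python
def escalation_savings(calls, fcr_before, fcr_after, cost_per_escalation):
    """Escalations avoided, and their cost, when FCR rises from fcr_before to fcr_after."""
    if not (0.0 <= fcr_before <= 1.0 and 0.0 <= fcr_after <= 1.0):
        raise ValueError("FCR rates must be fractions between 0 and 1")
    escalations_before = calls * (1 - fcr_before)  # calls that needed a second touch
    escalations_after = calls * (1 - fcr_after)
    avoided = escalations_before - escalations_after
    return avoided, avoided * cost_per_escalation
```

With hypothetical inputs of 100,000 calls, FCR rising from 60% to 70%, and $8 per escalation, roughly 10,000 escalations and $80,000 are avoided per period.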

Conclusion: Future-Proofing with Context-Aware Voice AI

Implementing an enterprise AI Voice OS with real-time CRM data integration is not merely an IT upgrade; it is a fundamental re-architecting of how a business interacts with its customers. Superficial AI voice agents are rapidly becoming commoditized. The true competitive moat lies in context engineering, robust data governance, and instantaneous synchronization with backend enterprise systems.

By leveraging advanced architectural frameworks like Alchemyst's Kathan engine and adhering to a rigorous migration blueprint, technical teams can successfully deploy voice platforms that operate with the intelligence, speed, and security demanded by the modern enterprise. Mastering context arithmetic and real-time CRM data flow is the definitive path to achieving an unparalleled, highly profitable automated conversational ecosystem.