The Strategic Imperative of AI Voice OS Adoption

In the modern enterprise landscape, conversational AI has moved beyond basic text chatbots and rudimentary Interactive Voice Response (IVR) systems. Organizations are now adopting AI Voice Operating Systems (OS) that can conduct complex, multi-turn dialogues with near-human response latency and deep contextual awareness. However, moving from legacy telecommunications infrastructure to a next-generation voice agent platform is a major operational shift. To manage it successfully, enterprise leaders need both a detailed migration blueprint and a rigorous ROI calculation. This guide bridges the gap between theoretical AI capabilities and practical, financially viable enterprise integration.

Many industry resources highlight the generic cost savings of AI but overlook the technical integration work, data migration requirements, and ROI frameworks that enterprise-scale deployment actually demands. By focusing on advanced information retrieval architectures, specifically context engineering rather than prompt engineering alone, businesses can deploy voice agents that augment human capabilities, automate repetitive workflows, and capture rich qualitative feedback during every customer interaction.

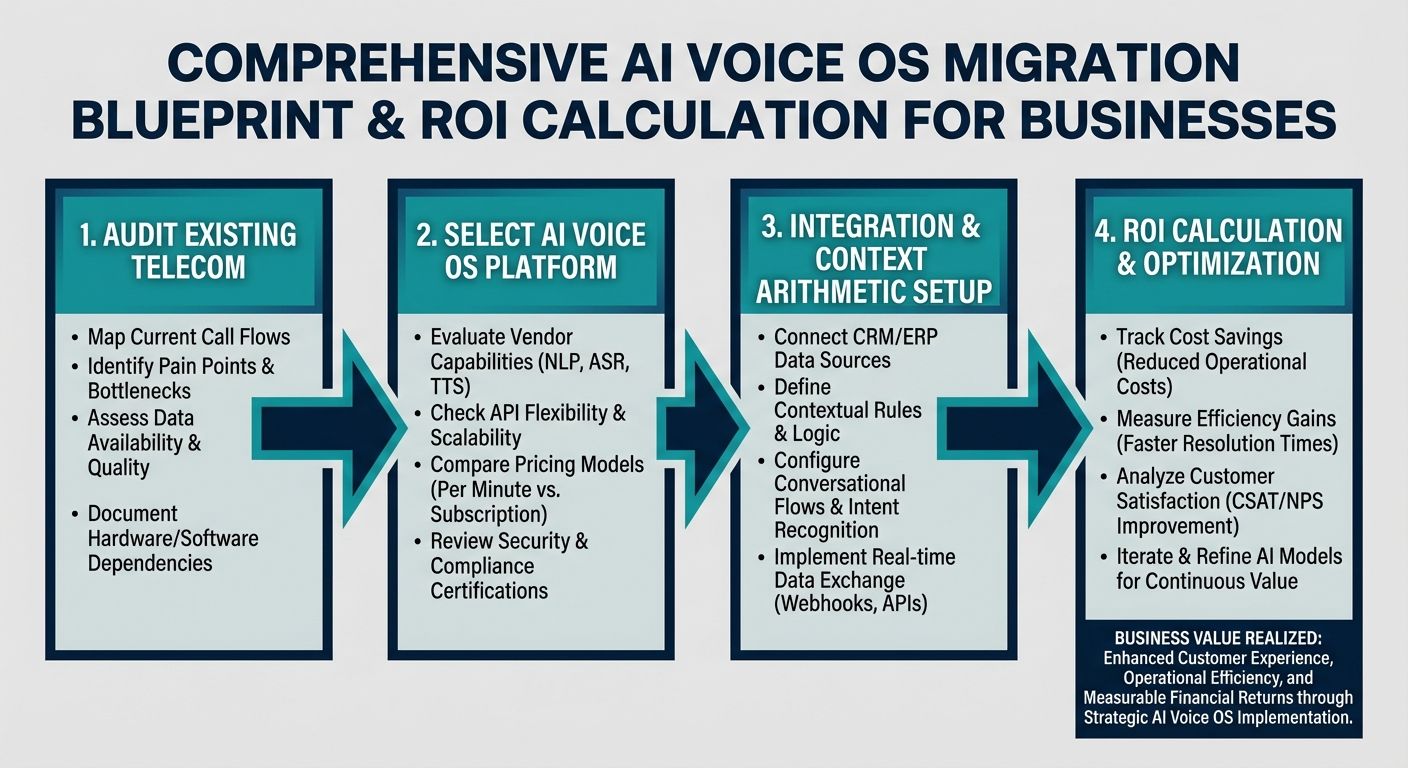

The Definitive AI Voice OS Migration Blueprint

Executing a seamless migration to an AI Voice OS demands a highly structured, phased approach. Unlike plug-and-play software, deploying enterprise voice AI involves deeply integrating the conversational engine with existing Customer Relationship Management (CRM) platforms, telephony SIP trunks, and backend databases. Here is the step-by-step blueprint for a robust technical integration.

Phase 1: Architectural Assessment and Telephony Mapping

The migration lifecycle begins with a rigorous audit of your current communication architecture. Enterprises must document their existing telephony infrastructure, mapping out SIP (Session Initiation Protocol) trunks, existing IVR call flows, and agent routing logic. During this phase, businesses must also define the operational constraints the AI Voice OS will work within: latency budgets (typically a sub-500-millisecond response time to keep the conversation fluid) and the API endpoints the AI will need to query in real time.
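A latency budget is easiest to reason about when it is split across the stages of a single conversational turn. The minimal Python sketch below does that for a 500 ms target. The stage names and millisecond figures are illustrative placeholders, not measurements, and the sketch assumes a streaming pipeline in which the LLM and text-to-speech stages are measured to their first token or first audio chunk.

```python
# Hypothetical per-stage latency estimates (ms) for one conversational turn.
# These numbers are placeholders; replace them with figures from your own profiling.
LATENCY_BUDGET_MS = 500

STAGE_ESTIMATES_MS = {
    "speech_to_text": 120,
    "context_retrieval": 80,
    "llm_first_token": 200,            # assumes a streaming LLM response
    "text_to_speech_first_audio": 80,  # assumes streaming speech synthesis
}


def check_latency_budget(stages: dict[str, int], budget_ms: int = LATENCY_BUDGET_MS) -> tuple[int, int]:
    """Return (total_ms, headroom_ms); headroom is negative when the budget is exceeded."""
    total = sum(stages.values())
    return total, budget_ms - total


total, headroom = check_latency_budget(STAGE_ESTIMATES_MS)
print(f"total={total} ms, headroom={headroom} ms")  # total=480 ms, headroom=20 ms
```

Keeping the budget as explicit data makes it easy to see which stage to optimize first when profiling shows the headroom going negative.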

Crucially, this phase requires a shift in design philosophy from traditional decision-tree mapping to contextual boundary definition. Instead of explicitly programming every possible path a user might take, developers must define the knowledge base, the API toolkit, and the operational guardrails the AI agent will utilize to dynamically navigate conversations.
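To illustrate the difference from a decision tree, the following Python sketch declares an agent's boundaries as data: which knowledge sources it may draw on, which tools it may call, and which topics or intents trigger a refusal or a human handoff. Every name here (`AgentBoundary`, `lookup_invoice`, `cancel_contract`, and so on) is hypothetical and not part of any specific platform's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentBoundary:
    """Hypothetical declaration of what a voice agent may use, instead of a scripted call flow."""
    knowledge_sources: tuple[str, ...]
    tools: frozenset[str]
    blocked_topics: frozenset[str] = frozenset()
    escalation_intents: frozenset[str] = frozenset()

    def can_call(self, tool_name: str) -> bool:
        """Only tools listed in the boundary may be invoked."""
        return tool_name in self.tools

    def route(self, intent: str, topic: str) -> str:
        """Decide how to handle a turn: refuse, escalate to a human, or let the agent answer."""
        if topic in self.blocked_topics:
            return "refuse"
        if intent in self.escalation_intents:
            return "escalate"
        return "agent"


billing_agent = AgentBoundary(
    knowledge_sources=("billing_faq", "refund_policy"),
    tools=frozenset({"lookup_invoice", "issue_refund"}),
    blocked_topics=frozenset({"legal_advice"}),
    escalation_intents=frozenset({"cancel_contract"}),
)
print(billing_agent.route("ask_balance", "billing"))  # agent
```

The agent still decides dynamically how to converse; the boundary only constrains what it can reach, which is the shift from enumerating paths to defining limits.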

Phase 2: Context Engineering and Data Migration

Once the architecture is mapped, the focus shifts to data migration and context engineering. Many early AI deployments struggled because they relied heavily on static prompt engineering, which tends to produce robotic-sounding, error-prone agents. Advanced platforms, such as Alchemyst's Kathan engine, instead use an approach known as context engineering: assembling the most relevant knowledge and customer data for each turn rather than relying on a fixed prompt.

During data migration, unstructured enterprise data (such as product manuals, policy documents, and historical support transcripts) must be ingested, split into chunks, converted into vector embeddings, and stored in a high-performance vector database. In parallel, structured CRM data (customer profiles, purchase histories, and real-time account statuses) must be connected through secure API gateways. The AI Voice OS can then pull this real-time metadata to inform each conversational turn, so that responses are personalized and grounded in the customer's history.
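The chunking step can be sketched in a few lines of Python. This minimal example splits a document into overlapping word windows and attaches source and metadata fields to each chunk so they can later support metadata filtering. The window sizes and the `tier` field are assumptions chosen for illustration; the embedding call itself is omitted because it depends on whichever embedding model and vector database you choose.

```python
from __future__ import annotations


def chunk_text(text: str, source: str, max_words: int = 200, overlap: int = 40,
               metadata: dict | None = None) -> list[dict]:
    """Split text into overlapping word windows; each chunk carries its source and metadata."""
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must satisfy 0 <= overlap < max_words")
    words = text.split()
    step = max_words - overlap
    chunks: list[dict] = []
    for start in range(0, len(words), step):
        window = words[start:start + max_words]
        chunks.append({**(metadata or {}), "source": source, "start_word": start,
                       "text": " ".join(window)})
        if start + max_words >= len(words):
            break  # this window already reached the end of the document
    return chunks


# Hypothetical document and metadata, used only to show the shape of the output.
doc = " ".join(f"w{i}" for i in range(10))
chunks = chunk_text(doc, source="manual.pdf", max_words=4, overlap=1, metadata={"tier": "enterprise"})
print([c["text"] for c in chunks])  # ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```

The overlap keeps a sentence that straddles a boundary retrievable from either neighboring chunk; each chunk's text would then be embedded and stored alongside its metadata.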

Phase 3: Security, Compliance, and Pipeline Testing

Enterprise voice data is highly sensitive. The migration blueprint must incorporate stringent security protocols, including real-time redaction of Personally Identifiable Information (PII) from call transcripts before they are sent to the Large Language Model (LLM). Compliance with applicable frameworks such as SOC 2, HIPAA, and GDPR is non-negotiable.
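As a rough illustration of transcript redaction, the Python sketch below replaces a few common PII formats with typed placeholders before the text would be forwarded to an LLM. The patterns are simplified assumptions covering US-style formats only; speech-to-text output often spells digits out as words, and names and addresses need entity recognition, so a production system would typically rely on a dedicated PII detection service instead of regular expressions alone.

```python
import re

# Simplified, illustrative patterns; real deployments need far broader coverage.
PII_PATTERNS = [
    ("EMAIL", re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")),
    ("SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("CARD", re.compile(r"\b\d(?:[ -]?\d){12,15}\b")),        # 13 to 16 digits, optional separators
    ("PHONE", re.compile(r"\b\d{3}[ .-]?\d{3}[ .-]?\d{4}\b")),
]


def redact(transcript: str) -> str:
    """Replace matched PII spans with [LABEL] placeholders, checking patterns in order."""
    for label, pattern in PII_PATTERNS:
        transcript = pattern.sub(f"[{label}]", transcript)
    return transcript


print(redact("My card is 4111 1111 1111 1111 and my email is jane.doe@example.com"))
# My card is [CARD] and my email is [EMAIL]
```

Pattern order matters: the SSN pattern runs before the phone pattern so that a hyphenated SSN is labeled correctly.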

Before moving to production, organizations must conduct rigorous pipeline testing. This involves simulating thousands of concurrent calls to stress-test telephony APIs, measuring vector search retrieval latencies, and evaluating the contextual accuracy of the AI's responses. A phased rollout, starting with a low-risk subset of inbound queries, lets businesses monitor system behavior and refine the context engine before full-scale deployment.
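The concurrency side of this testing can be prototyped with Python's `asyncio`. In the sketch below, `simulated_call` is a hypothetical stand-in that sleeps for a random service time; in a real test it would place a synthetic call against a staging telephony or LLM endpoint. A semaphore caps the number of simultaneous calls, and a simple index-based percentile summarizes the latency distribution.

```python
import asyncio
import random
import time


async def simulated_call(call_id: int, rng: random.Random) -> float:
    """Hypothetical stand-in for one synthetic call; returns end-to-end latency in ms."""
    start = time.perf_counter()
    await asyncio.sleep(rng.uniform(0.05, 0.3))  # placeholder service time
    return (time.perf_counter() - start) * 1000


async def load_test(concurrency: int, total_calls: int, seed: int = 0) -> list[float]:
    """Run total_calls simulated calls with at most `concurrency` in flight at once."""
    rng = random.Random(seed)
    semaphore = asyncio.Semaphore(concurrency)

    async def bounded(call_id: int) -> float:
        async with semaphore:
            return await simulated_call(call_id, rng)

    return list(await asyncio.gather(*(bounded(i) for i in range(total_calls))))


def percentile(values: list[float], pct: float) -> float:
    """Simple index-based percentile over the sorted values."""
    ordered = sorted(values)
    index = min(len(ordered) - 1, round(pct / 100 * (len(ordered) - 1)))
    return ordered[index]


if __name__ == "__main__":
    latencies = asyncio.run(load_test(concurrency=200, total_calls=1000))
    print(f"p50={percentile(latencies, 50):.0f} ms  p95={percentile(latencies, 95):.0f} ms")
```

Tracking tail latency (p95 or p99) rather than the average matters for voice, because a small share of slow turns is exactly what callers notice.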

The Technical Engine: Context Arithmetic for Voice

To understand how a modern AI Voice OS operates during migration, technical evaluators must look under the hood at its information retrieval mechanisms. The competitive advantage of platforms like Alchemyst lies in their architecture, specifically a computational process known as context arithmetic. This process systematically determines the most relevant information to feed the voice agent in real time, reducing hallucinations and keeping responses precise.

Overcoming Robotic Responses Through Dynamic Retrieval

Many voice agents sound robotic because they rely on rigid scripts or overly broad LLM prompts. Context arithmetic addresses this by treating contextual retrieval as a set-algebraic pipeline. As a user speaks, the Kathan engine curates the specific set of facts, guidelines, and customer data required for that turn of the conversation.

The Five-Stage Context Determination Pipeline

For developers and technical teams managing the migration, integrating this five-stage pipeline is the cornerstone of a successful deployment:

- 1. Semantic Similarity Search: When a user asks a question, the system queries the vector database for the most conceptually similar knowledge chunks. This helps the AI capture the intent behind the words rather than relying on exact keyword matches.

- 2. Metadata Filtering: The semantic search results are instantly filtered against hard constraints. For example, if a user is calling about an enterprise software tier, the system filters out all documentation related to the consumer tier, ensuring the AI only accesses relevant data.

- 3. Set-Algebraic Pipeline: The system combines context sets using intersections (for example, keeping only documents the caller is permitted to see) and unions (for example, adding the caller's real-time CRM state to the retrieved product knowledge), producing a single, unified context profile.

- 4. Deduplication: To reduce LLM latency and token costs, the engine strips out redundant instructions and overlapping data points retrieved from different sources.

- 5. Ranking: Finally, the remaining context blocks are scored and ranked by relevance to the current turn. Only the highest-ranked blocks are injected into the LLM's context window for the next spoken response.
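The five stages can be sketched end to end in Python. This is a minimal illustration under stated assumptions, not the Kathan engine's implementation or API: embeddings are tiny hand-written vectors, `permitted_ids` stands in for whatever access rules apply to the caller, deduplication compares whitespace-normalized text, and ranking reuses cosine similarity to the query.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Chunk:
    chunk_id: str
    text: str
    embedding: list[float]
    metadata: dict = field(default_factory=dict)


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def build_context(query_vec: list[float], knowledge: list[Chunk], crm_facts: list[Chunk],
                  filters: dict, permitted_ids: set[str],
                  top_k: int = 5, final_n: int = 3) -> list[Chunk]:
    by_id = {c.chunk_id: c for c in knowledge + crm_facts}

    # 1. Semantic similarity search: top_k knowledge chunks closest to the query.
    candidates = sorted(knowledge, key=lambda c: cosine(query_vec, c.embedding), reverse=True)[:top_k]

    # 2. Metadata filtering: drop chunks that violate hard constraints (e.g. product tier).
    candidates = [c for c in candidates if all(c.metadata.get(k) == v for k, v in filters.items())]

    # 3. Set algebra: intersect with what this caller may see, then union with CRM facts.
    context_ids = ({c.chunk_id for c in candidates} & permitted_ids) | {c.chunk_id for c in crm_facts}

    # 4. Deduplication: keep one chunk per normalized text (sorted-ID order for determinism).
    seen: set[str] = set()
    unique: list[Chunk] = []
    for cid in sorted(context_ids):
        key = " ".join(by_id[cid].text.lower().split())
        if key not in seen:
            seen.add(key)
            unique.append(by_id[cid])

    # 5. Ranking: score survivors against the query and keep the best final_n.
    unique.sort(key=lambda c: cosine(query_vec, c.embedding), reverse=True)
    return unique[:final_n]


# Hypothetical knowledge base and CRM fact for an enterprise-tier caller.
knowledge = [
    Chunk("k1", "Enterprise SSO setup guide", [0.9, 0.1], {"tier": "enterprise"}),
    Chunk("k2", "Consumer password reset", [0.95, 0.05], {"tier": "consumer"}),
    Chunk("k3", "Enterprise SSO  setup guide", [0.85, 0.2], {"tier": "enterprise"}),
    Chunk("k4", "Enterprise billing FAQ", [0.1, 0.9], {"tier": "enterprise"}),
]
crm = [Chunk("c1", "Caller's account is on the Enterprise tier", [0.7, 0.7])]
context = build_context([1.0, 0.0], knowledge, crm, {"tier": "enterprise"}, {"k1", "k3", "k4"}, top_k=3)
print([c.chunk_id for c in context])  # ['k1', 'c1']
```

In this example the consumer-tier chunk `k2` scores highest on similarity but is removed by the metadata filter, and `k3` is dropped as a near-verbatim duplicate of `k1`.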

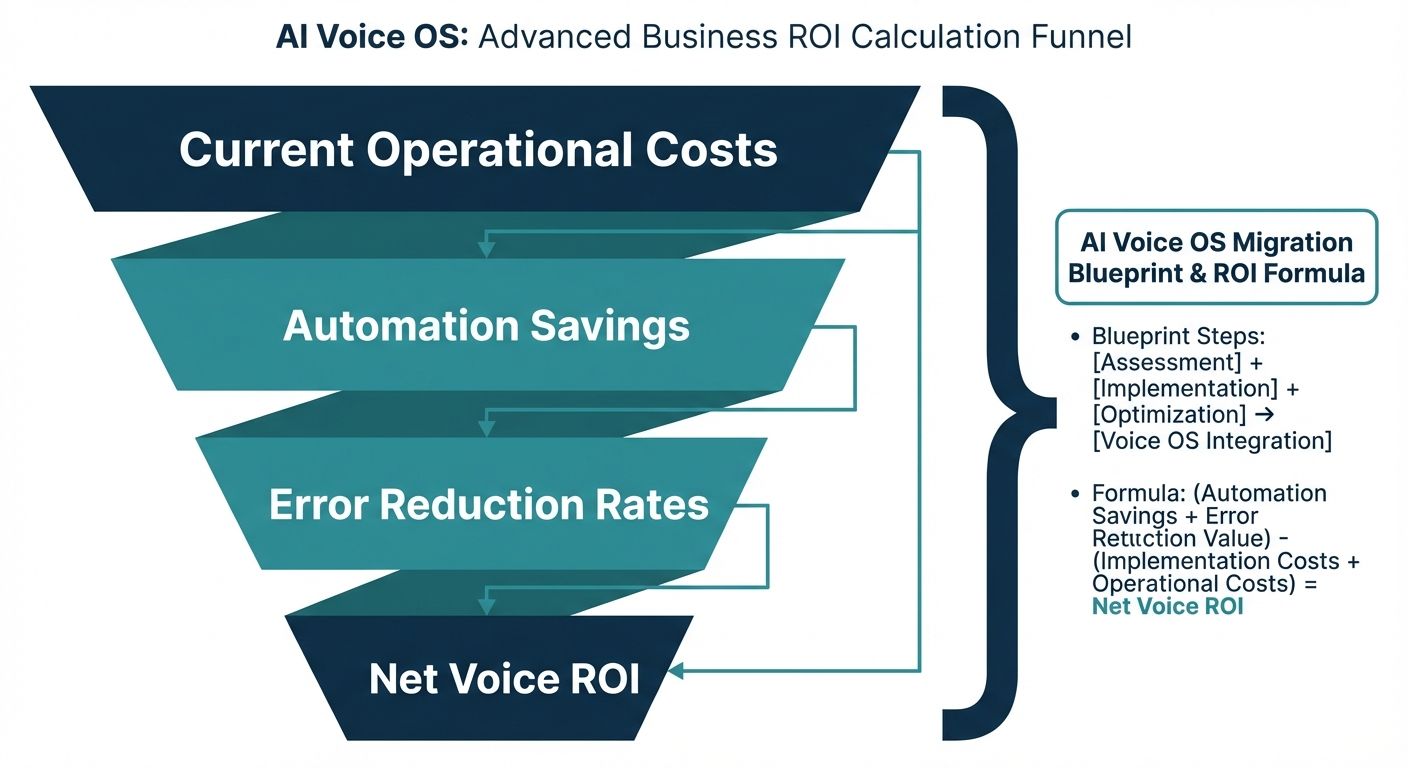

Advanced ROI Calculation for Businesses

Building a compelling business case for an AI Voice OS requires a structured ROI framework that goes well beyond generic cost savings. Headcount reduction and call deflection are often the primary focus, but a robust ROI calculation must capture the full financial impact of contextual automation.

Moving Beyond Generic Cost-Per-Minute Metrics

Traditional BPO (Business Process Outsourcing) providers and legacy SaaS voice platforms often use minute-based billing. This model misaligns incentives, because longer calls generate more revenue for the vendor. When calculating the ROI of an advanced AI Voice OS, businesses should shift their financial modeling toward resolution efficiency and outcome generation.

The Cost Per Qualified Outcome (CPQO) Model

The most accurate metric for evaluating voice AI ROI is the Cost Per Qualified Outcome (CPQO). This model shifts the financial focus from time spent to value delivered. To calculate this, businesses should use the following structured framework:

- Step 1: Calculate Total Migration & Integration CapEx: Include the costs of the architectural audit, data vectorization, API integration, and initial context engineering setup.

- Step 2: Calculate Ongoing OpEx: Factor in LLM token costs, telephony/SIP trunking fees, vector database hosting, and continuous optimization costs.

- Step 3: Define Qualified Outcomes: A qualified outcome is a specific, high-value resolution, such as a successfully booked appointment, a processed payment, a verified technical troubleshooting session, or a qualified lead passed to sales.

- Step 4: Execute the ROI Formula: Divide the total cost for a given period (the OpEx for that period plus the share of the Step 1 CapEx amortized over it) by the number of qualified outcomes the AI achieved in the same period. Compare this CPQO against the historical cost of a human agent achieving the same outcome.
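The four steps reduce to a short calculation. The Python sketch below computes a monthly CPQO, assuming the migration CapEx is amortized evenly over a chosen number of months. All input figures are placeholders for illustration, not benchmarks.

```python
def cpqo(capex: float, amortization_months: int, monthly_opex: float, qualified_outcomes: int) -> float:
    """Monthly cost per qualified outcome: (CapEx / amortization_months + monthly OpEx) / outcomes."""
    if amortization_months <= 0:
        raise ValueError("amortization_months must be positive")
    if qualified_outcomes <= 0:
        raise ValueError("need at least one qualified outcome")
    monthly_capex = capex / amortization_months  # straight-line amortization (an assumption)
    return (monthly_capex + monthly_opex) / qualified_outcomes


# Placeholder inputs; substitute your own audit, integration, and usage figures.
ai_cpqo = cpqo(capex=120_000, amortization_months=12, monthly_opex=15_000, qualified_outcomes=5_000)
human_cpqo = 12.0  # hypothetical historical cost per outcome for human agents
saving_per_outcome = human_cpqo - ai_cpqo
print(f"AI CPQO = {ai_cpqo:.2f}, saving per outcome = {saving_per_outcome:.2f}")
# AI CPQO = 5.00, saving per outcome = 7.00
```

The amortization period is a modeling choice: a shorter period front-loads the migration cost and makes early months look worse, so it is worth stating explicitly in any business case.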

By using the CPQO model, enterprise leaders can demonstrate that the AI Voice OS is not merely a cost-reduction tool but an efficient, scalable engine for business outcomes.

Measuring Soft Savings and Qualitative Value

A comprehensive ROI calculation should also account for soft savings. Unlike traditional IVRs, an advanced AI Voice OS can capture rich qualitative feedback and run sentiment analysis during the call itself, so every call can act as an automated focus group. Extracting real-time product feedback, identifying emerging churn risks through sentiment scoring, and capturing granular user preferences all feed product development and customer retention strategies. These benefits are harder to quantify than hard savings, but they can be estimated, for example as the revenue retained from churn risks flagged early.
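One way to put a number on the churn-risk benefit is sketched below in Python: flag accounts whose average call sentiment falls below a threshold, then estimate retained revenue as flagged accounts times an assumed save rate times annual revenue per account. The sentiment scale of -1 to 1, the threshold, the save rate, and the revenue figure are all hypothetical assumptions.

```python
def flag_churn_risks(call_sentiment: dict[str, list[float]], threshold: float = -0.3) -> set[str]:
    """Flag accounts whose mean call sentiment (assumed range -1..1) falls below threshold."""
    return {acct for acct, scores in call_sentiment.items()
            if scores and sum(scores) / len(scores) < threshold}


def retained_revenue_estimate(flagged_accounts: int, save_rate: float,
                              annual_revenue_per_account: float) -> float:
    """Expected annual revenue retained if proactive outreach saves `save_rate` of flagged accounts."""
    if not 0.0 <= save_rate <= 1.0:
        raise ValueError("save_rate must be between 0 and 1")
    return flagged_accounts * save_rate * annual_revenue_per_account


# Hypothetical inputs: 200 flagged accounts, 25% saved, 3,000 in annual revenue each.
estimate = retained_revenue_estimate(200, 0.25, 3_000)
print(estimate)  # 150000.0
```

The save rate is the most uncertain input, so it is worth validating against the results of an actual outreach campaign before relying on the estimate.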

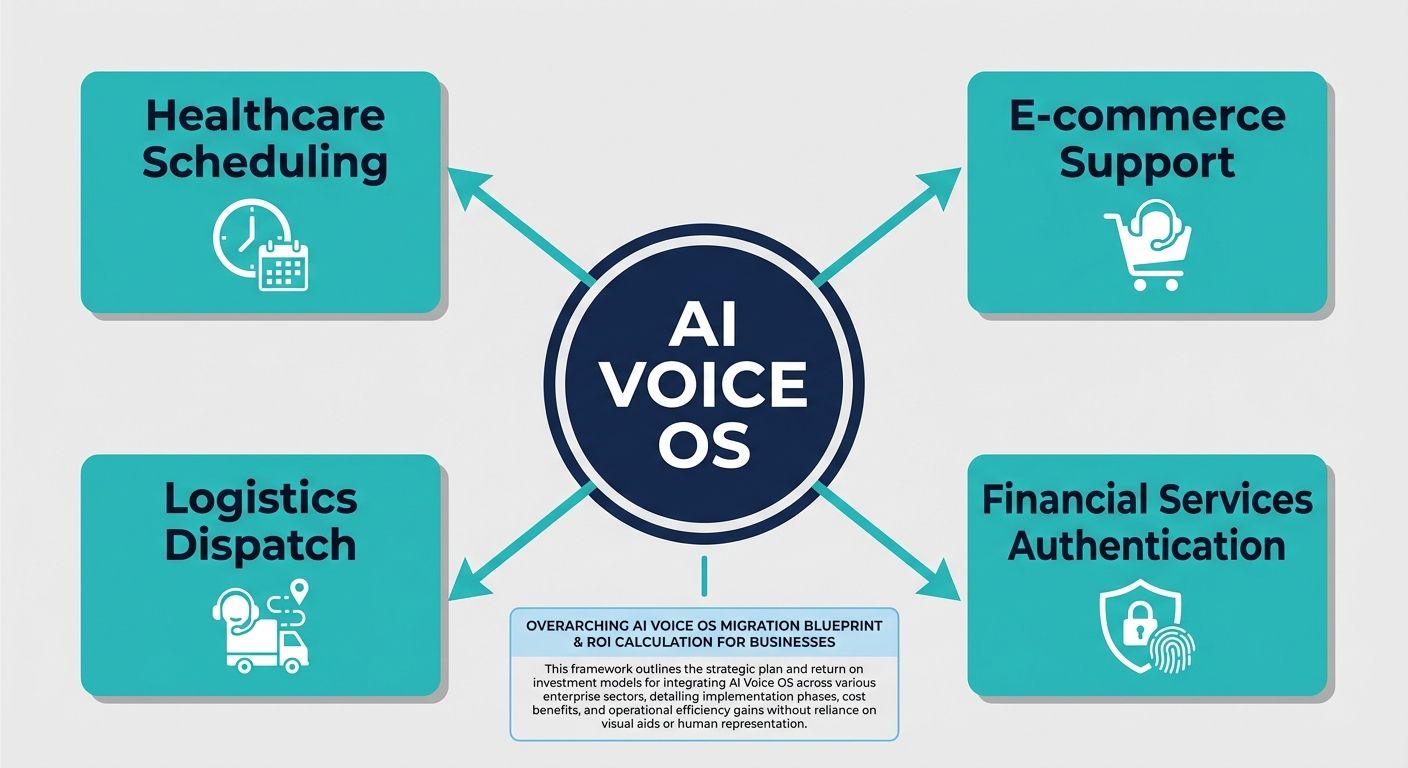

Concrete Industry-Focused Use Cases for AI Voice OS

To contextualize the migration blueprint and ROI frameworks, it is essential to examine specific, industry-focused use cases where context-aware AI voice agents deliver transformative business value.

Enterprise Customer Service and Technical Support

In high-volume enterprise tech support, human agents frequently suffer from burnout due to repetitive password resets and basic troubleshooting. An AI Voice OS integrated with a company's internal knowledge base and user authentication APIs can independently resolve many of these Tier 1 and Tier 2 issues. The AI verifies the caller's identity through the authentication APIs, and the context engine's metadata filtering then restricts retrieval to that caller's products and account, so the AI can read real-time system outage data and guide the user through complex diagnostic steps. The ROI comes from higher deflection rates and the reallocation of human engineers to complex, escalated issues, a concrete example of human-AI augmentation.
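The following Python sketch shows one way to keep identity verification and retrieval scoping separate. Before verification, only a public tool (outage status) is allowed and retrieval is limited to public documents; after a successful check, account-level tools unlock and retrieval is filtered by the caller's product tier. The tool names, the one-time-passcode check, and the filter keys are hypothetical; a real deployment would call its own authentication service.

```python
import hmac
from dataclasses import dataclass
from typing import Optional

# Hypothetical tool catalog for a tech-support voice agent.
PUBLIC_TOOLS = {"get_outage_status"}
ACCOUNT_TOOLS = {"reset_password", "get_device_diagnostics"}


@dataclass
class CallSession:
    caller_id: str
    verified: bool = False
    product_tier: Optional[str] = None


def verify_caller(session: CallSession, otp_entered: str, otp_expected: str, tier: str) -> bool:
    """Stand-in for an authentication API call; uses a constant-time comparison."""
    if hmac.compare_digest(otp_entered, otp_expected):
        session.verified = True
        session.product_tier = tier
    return session.verified


def authorize_tool(session: CallSession, tool: str) -> bool:
    """Public tools are always allowed; account tools require a verified caller."""
    if tool in PUBLIC_TOOLS:
        return True
    if tool in ACCOUNT_TOOLS:
        return session.verified
    return False  # unknown tools are denied by default


def retrieval_filters(session: CallSession) -> dict:
    """Metadata filter for the retrieval stage, scoped by verification status."""
    return {"tier": session.product_tier} if session.verified else {"visibility": "public"}
```

Denying unknown tools by default means a new capability has to be added to the catalog deliberately before the agent can use it.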

Financial Services and Debt Collections

In the financial sector, an AI Voice OS fundamentally alters outbound operations such as debt collections or loan status updates. Because conversations about finances require empathy and strict factual accuracy, prompt-engineered bots often fail here, while context-engineered agents are far better suited. The AI can securely pull the exact arrearage amount, assemble the relevant account and policy context through the set-algebraic pipeline, calculate settlement options on the fly, and negotiate payment terms within strict compliance boundaries. The ROI calculation in this sector is comparatively straightforward: weigh the cost of the AI deployment against the total reclaimed revenue plus the reduction in compliance violation fines.
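A safe pattern for "settlement options on the fly" is to compute the options deterministically and let the agent only choose among them, so it never invents terms. The Python sketch below generates a discounted lump-sum offer and installment plans within policy bounds. The allowed terms, minimum installment, and maximum discount are hypothetical placeholders; real limits would come from your compliance team and the regulations that apply to you. Amounts are in integer cents to avoid floating-point rounding.

```python
# Hypothetical policy bounds; real values come from compliance and applicable regulation.
ALLOWED_TERMS_MONTHS = (3, 6, 12)
MIN_INSTALLMENT_CENTS = 5_000
MAX_LUMP_SUM_DISCOUNT_PCT = 10


def settlement_options(arrears_cents: int) -> list[dict]:
    """Enumerate the offers the agent may present, all within the policy bounds above."""
    options = [{
        "type": "lump_sum",
        "amount_cents": arrears_cents * (100 - MAX_LUMP_SUM_DISCOUNT_PCT) // 100,
    }]
    for months in ALLOWED_TERMS_MONTHS:
        installment = -(-arrears_cents // months)  # ceiling division so the plan covers the full balance
        if installment >= MIN_INSTALLMENT_CENTS:
            options.append({"type": "installments", "months": months, "installment_cents": installment})
    return options


for option in settlement_options(120_000):
    print(option)
# {'type': 'lump_sum', 'amount_cents': 108000}
# {'type': 'installments', 'months': 3, 'installment_cents': 40000}
# {'type': 'installments', 'months': 6, 'installment_cents': 20000}
# {'type': 'installments', 'months': 12, 'installment_cents': 10000}
```

For smaller balances, longer terms drop out automatically once the installment would fall below the minimum.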

Healthcare Patient Scheduling and Triage

For large healthcare networks, patient no-shows and inefficient scheduling are major drains on profitability. A HIPAA-compliant AI Voice OS can handle inbound calls, using semantic similarity to interpret a patient's described symptoms, checking physician availability through a secure API, and booking the appointment. The AI can also place proactive outbound calls to confirm appointments, which can substantially reduce no-show rates. ROI is measured by the increase in physician utilization rates and the reduction in administrative staffing costs.
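Selecting which patients receive proactive confirmation calls is a simple scheduling query. The Python sketch below returns unconfirmed appointments that start within the next 48 hours, soonest first. The appointment record shape and the 48-hour window are assumptions for illustration; in practice the records would come from the scheduling system's API.

```python
from datetime import datetime, timedelta


def due_for_confirmation(appointments: list[dict], now: datetime,
                         window: timedelta = timedelta(hours=48)) -> list[str]:
    """IDs of unconfirmed appointments starting within `window` from `now`, soonest first."""
    due = [a for a in appointments
           if not a["confirmed"] and now <= a["starts_at"] <= now + window]
    return [a["id"] for a in sorted(due, key=lambda a: a["starts_at"])]


# Hypothetical appointment records.
now = datetime(2024, 5, 1, 9, 0)
appointments = [
    {"id": "a1", "starts_at": now + timedelta(hours=24), "confirmed": False},
    {"id": "a2", "starts_at": now + timedelta(hours=12), "confirmed": False},
    {"id": "a3", "starts_at": now + timedelta(hours=24), "confirmed": True},
    {"id": "a4", "starts_at": now + timedelta(hours=72), "confirmed": False},
    {"id": "a5", "starts_at": now - timedelta(hours=1), "confirmed": False},
]
print(due_for_confirmation(appointments, now))  # ['a2', 'a1']
```

Calling the soonest appointments first leaves the most time to rebook a slot if the patient cancels, which is what drives the utilization gains described above.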

Conclusion: Executing the Transition to Context-Aware AI

Developing a sound migration blueprint and ROI calculation for an AI Voice OS is an essential undertaking for modern enterprises. By moving beyond outdated conversational paradigms and adopting deep technical architectures like context arithmetic, organizations can build intelligent, responsive voice agents that understand the customer lifecycle. By meticulously planning the data migration, enforcing strict security standards, and evaluating success through financial models like the Cost Per Qualified Outcome, businesses can confidently justify their AI investments. Ultimately, the transition to an AI Voice OS is not about replacing human talent; it is about augmenting enterprise capabilities, unlocking new operational efficiency, and delivering highly contextual, engaging customer experiences at scale.