The Paradigm Shift in Enterprise Voice AI Economics

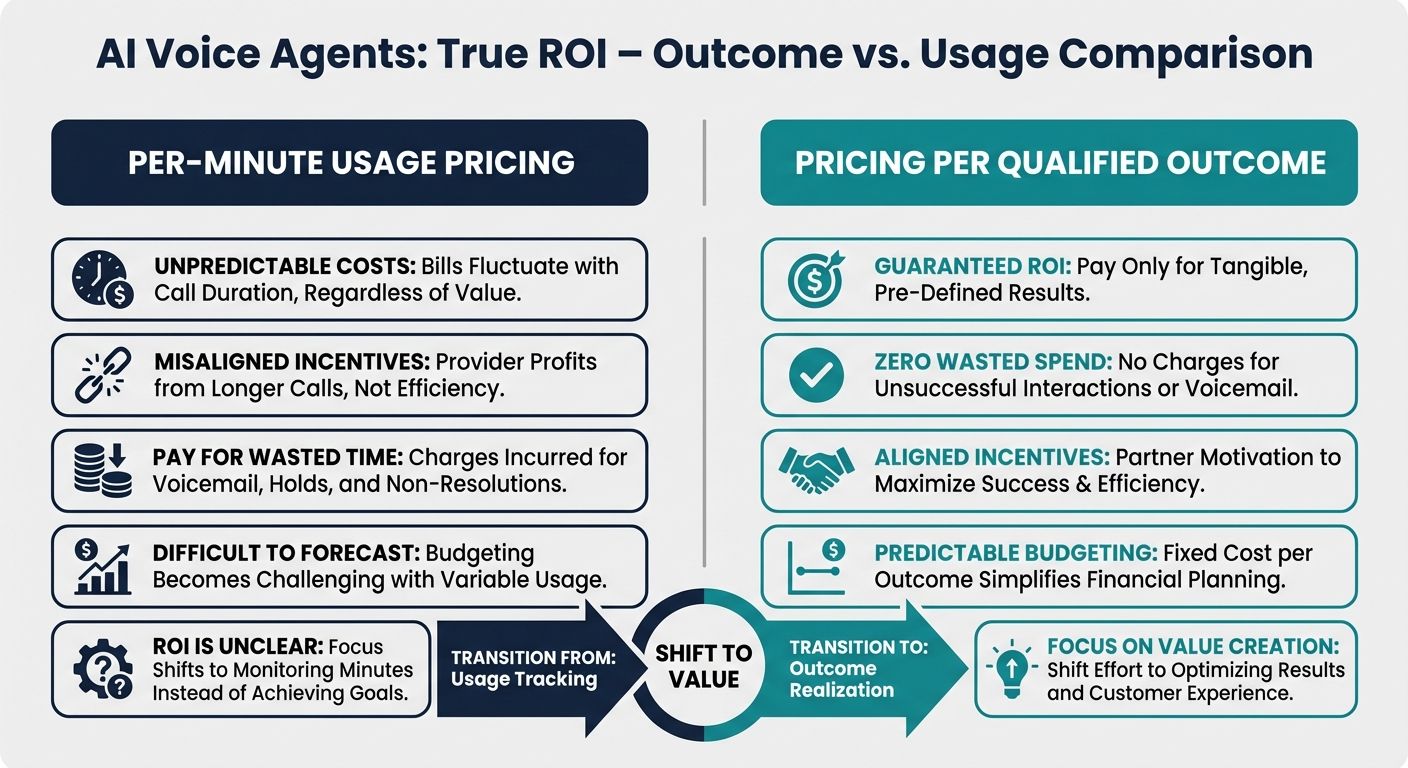

In the rapidly expanding ecosystem of enterprise artificial intelligence, businesses are aggressively deploying conversational systems to streamline customer service, sales, and internal operations. However, technical evaluators and enterprise leaders are discovering a critical flaw in the modern AI landscape: the financial models governing these deployments are misaligned with the value they deliver. Most vendors bill for usage or access (per minute, per API call, or per seat) rather than for results. This structure penalizes businesses for complex customer interactions, and per-minute billing in particular rewards vendors for context-free systems that keep callers on the line longer.

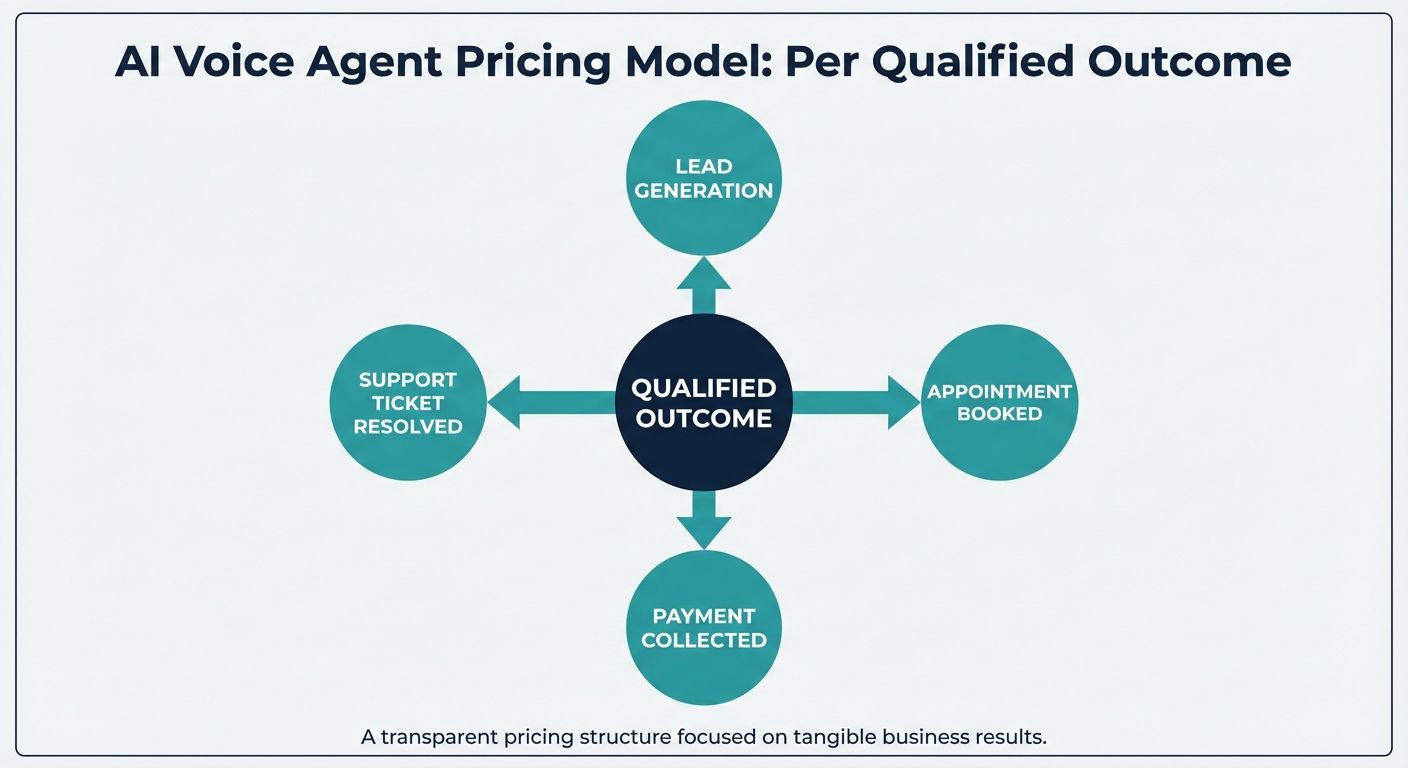

To combat this structural inefficiency, forward-thinking enterprises are migrating toward a different financial and operational framework: the AI voice agent pricing model per qualified outcome. This model shifts the paradigm from paying for computational effort to paying for actual business value. Rather than paying for conversational dead-ends and AI hallucinations, businesses pay only when the voice agent achieves a predefined, highly specific goal. In this technical and commercial blueprint, we dissect the structural flaws of legacy pricing, outline a migration path to an outcome-based Voice OS, and explore the architectural requirements, such as Alchemyst's Kathan engine and its proprietary context arithmetic, that make outcome guarantees computationally feasible.

The Structural Flaws of Traditional Pricing Models

Before examining why the AI voice agent pricing model per qualified outcome is attracting enterprise technical evaluators, it is worth analyzing the historical and conceptual failures of existing Voice AI deployments. The market is saturated with platforms that take a product-centric, API-first approach and promote themselves as highly configurable tools for developers. While platforms like Vapi or Retell offer robust developer communities and self-service dashboards, their commercial models carry hidden costs that can significantly erode enterprise ROI.

Per-Minute Billing: Incentivizing Inefficiency

The most pervasive pricing structure in the Voice AI industry is the per-minute billing model. At first glance, this appears to be a fair, consumption-based metric. However, it creates a perverse incentive: when vendors charge by the minute, their revenue grows with call length. Context-free agents (systems that lack deep historical memory and real-time access to enterprise data) often trap users in frustrating conversational loops. They ask repetitive questions, struggle with nuanced user intent, and take excessively long to process simple requests. Under a per-minute model, the enterprise absorbs the financial penalty for the AI's incompetence.

Per-Seat Subscription Traps

Another common approach is the per-seat subscription, heavily utilized by unified communications platforms. This model typically grants businesses access to an AI assistant for a flat monthly fee per human agent. While this makes budgeting predictable, it entirely divorces the cost of the software from the value it generates. If the AI agent fails to resolve a single customer ticket, deflect a meaningful volume of calls, or successfully process an inbound lead, the enterprise is still contractually obligated to pay the subscription fee. This generic cost structure lacks the strict accountability demanded by modern ROI frameworks.

The Hidden Costs of Context-Free Agents

Beyond the baseline subscription or per-minute rates, enterprises face substantial hidden costs when deploying standard API voice solutions. Because these platforms primarily provide the infrastructure to build agents, they push the technical burden of context management onto the client's engineering teams. Businesses may spend hundreds of thousands of dollars developing custom middleware to handle data migration, secure integration, and prompt engineering. Without native context handling, these agents lose track of earlier turns and of the customer's history, leading to poor customer experiences and abandoned calls. The actual total cost of ownership can rise far beyond the vendor's advertised rates.

Defining the AI Voice Agent Pricing Model Per Qualified Outcome

The AI voice agent pricing model per qualified outcome fundamentally realigns the economic relationship between the enterprise and the AI vendor. In this model, the business dictates the specific criteria for success, and the vendor is only compensated when the AI autonomously fulfills those criteria. A qualified outcome is not merely a completed call or a transcribed conversation; it is a measurable, definitive business action that directly impacts the company's bottom line.

By adopting this model, enterprises stop paying vendor fees for AI hallucinations, dropped calls, and failed intent resolutions. If a caller hangs up out of frustration, the AI failed, and the enterprise pays the vendor nothing for that call. If the AI correctly navigates a complex, multi-turn conversation and successfully executes an API call to update a CRM, the outcome is achieved, and billing is triggered. This forces the underlying AI architecture to be ruthlessly efficient, highly accurate, and deeply integrated with the enterprise's backend systems.
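The billing rule described above can be sketched in a few lines. This is a minimal illustration, not any vendor's actual billing system: the `CallRecord` fields, the `CallEnd` categories, and the `backend_confirmed` flag (standing in for, say, a CRM API acknowledging the update) are hypothetical names chosen for the example. Amounts are kept in integer cents to avoid floating-point rounding.

```python
from dataclasses import dataclass
from enum import Enum


class CallEnd(Enum):
    """Hypothetical ways a call can end."""
    CALLER_HUNG_UP = "caller_hung_up"
    ESCALATED = "escalated_to_human"
    COMPLETED = "completed"


@dataclass(frozen=True)
class CallRecord:
    call_id: str
    end: CallEnd
    # Hypothetical flag: True only once the backend (e.g. the CRM)
    # has confirmed the write the agent attempted.
    backend_confirmed: bool


def is_billable(call: CallRecord) -> bool:
    """Billable only if the agent finished the call AND the backend confirmed the action."""
    return call.end is CallEnd.COMPLETED and call.backend_confirmed


def invoice_cents(calls: list[CallRecord], fee_cents: int) -> int:
    """Charge the per-outcome fee for billable calls; every other call costs nothing."""
    return fee_cents * sum(1 for call in calls if is_billable(call))


calls = [
    CallRecord("c1", CallEnd.CALLER_HUNG_UP, backend_confirmed=False),
    CallRecord("c2", CallEnd.ESCALATED, backend_confirmed=False),
    CallRecord("c3", CallEnd.COMPLETED, backend_confirmed=False),  # agent said "done", CRM never confirmed
    CallRecord("c4", CallEnd.COMPLETED, backend_confirmed=True),
]
print(invoice_cents(calls, fee_cents=150))  # prints 150: only c4 is billed
```

Requiring confirmation from the backend, rather than trusting the agent's own report, is what keeps a hallucinated "done" (call `c3`) from triggering a charge.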

Concrete Industry Use Cases for Qualified Outcomes

To implement a structured ROI framework beyond generic cost savings, businesses must define exactly what constitutes a qualified outcome within their specific vertical. The flexibility of the AI voice agent pricing model per qualified outcome allows for highly tailored definitions of success.

Healthcare and Patient Triage

In the healthcare sector, standard AI bots often fail due to a lack of HIPAA-compliant context retention and the inability to navigate complex patient histories. A qualified outcome in this vertical might be defined as: The successful authentication of a patient, accurate symptom intake matching predefined triage protocols, and the confirmed scheduling of an appointment within the EHR system. If the AI must transfer the call to a human nurse because it cannot resolve the patient's intent, the outcome is not qualified.

Financial Services and Fraud Prevention

For banking and financial institutions, security and exact execution are paramount. An API-first voice tool that hallucinates a routing number is a catastrophic liability. Here, a qualified outcome could be defined as: The successful multi-factor verification of a caller's identity, the accurate retrieval of recent transaction history, and the complete execution of a reported fraud ticket resulting in a frozen card status. Only when the core banking API confirms the freeze does the AI platform earn its fee.

E-commerce and Retail Logistics

E-commerce businesses carry heavy overhead from repetitive order-status inquiries and return processing. Under a qualified outcome pricing model, a vendor is paid when the AI agent locates an order via metadata filtering, clearly communicates the real-time shipping status to the customer, and processes the specific return merchandise authorization (RMA) without requiring a human agent's intervention. A conversation that ends with "I don't know" earns the vendor nothing under this model.
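The three definitions above share one shape: a fixed set of conditions that must all hold, plus a rule that a human takeover disqualifies the call. Below is a minimal sketch, assuming each condition is recorded as a boolean event on the call. The criterion names are hypothetical labels for the conditions described in the text, not fields from any real EHR, core-banking, or order-management API.

```python
from collections.abc import Mapping

# Hypothetical criterion names, one tuple per vertical described above.
OUTCOME_CRITERIA: dict[str, tuple[str, ...]] = {
    "healthcare": (
        "patient_authenticated",
        "triage_protocol_matched",
        "ehr_appointment_confirmed",
    ),
    "financial_services": (
        "mfa_verified",
        "transactions_retrieved",
        "fraud_ticket_created",
        "card_freeze_confirmed",  # set only when the core banking API confirms
    ),
    "ecommerce": (
        "order_located",
        "shipping_status_communicated",
        "rma_processed",
    ),
}


def is_qualified(vertical: str, events: Mapping[str, bool]) -> bool:
    """True only if every criterion for the vertical is True and no human took over."""
    if events.get("transferred_to_human", False):
        return False
    # A criterion that was never recorded counts as not met.
    return all(events.get(name, False) for name in OUTCOME_CRITERIA[vertical])


triage_call = {
    "patient_authenticated": True,
    "triage_protocol_matched": True,
    "ehr_appointment_confirmed": True,
}
print(is_qualified("healthcare", triage_call))                                    # True
print(is_qualified("healthcare", {**triage_call, "transferred_to_human": True}))  # False
```

Treating an unrecorded criterion as `False` errs on the side of not billing, which matches the intent that only fully completed outcomes count.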

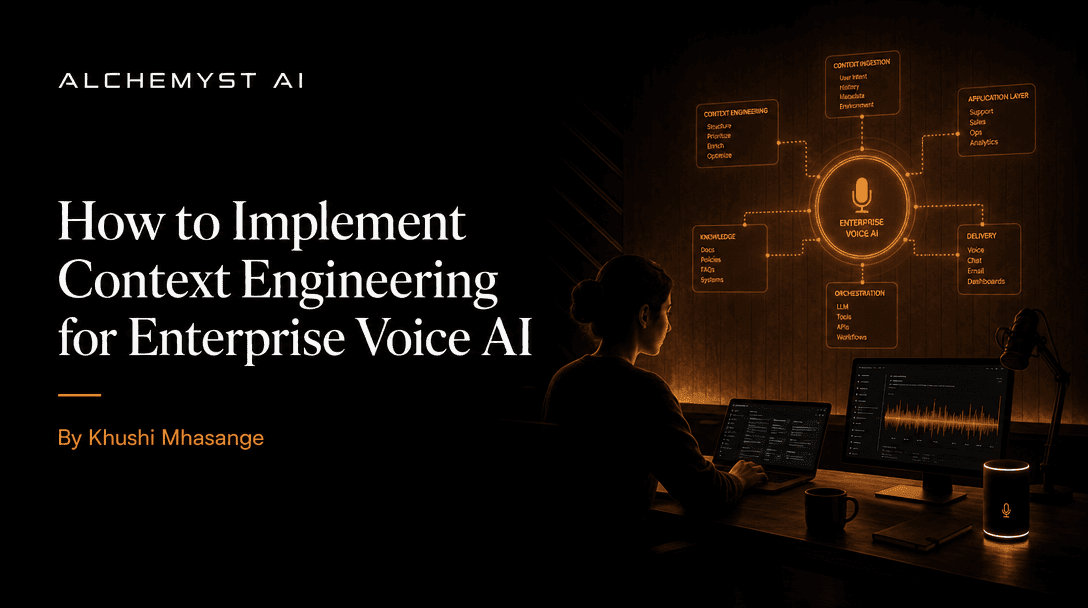

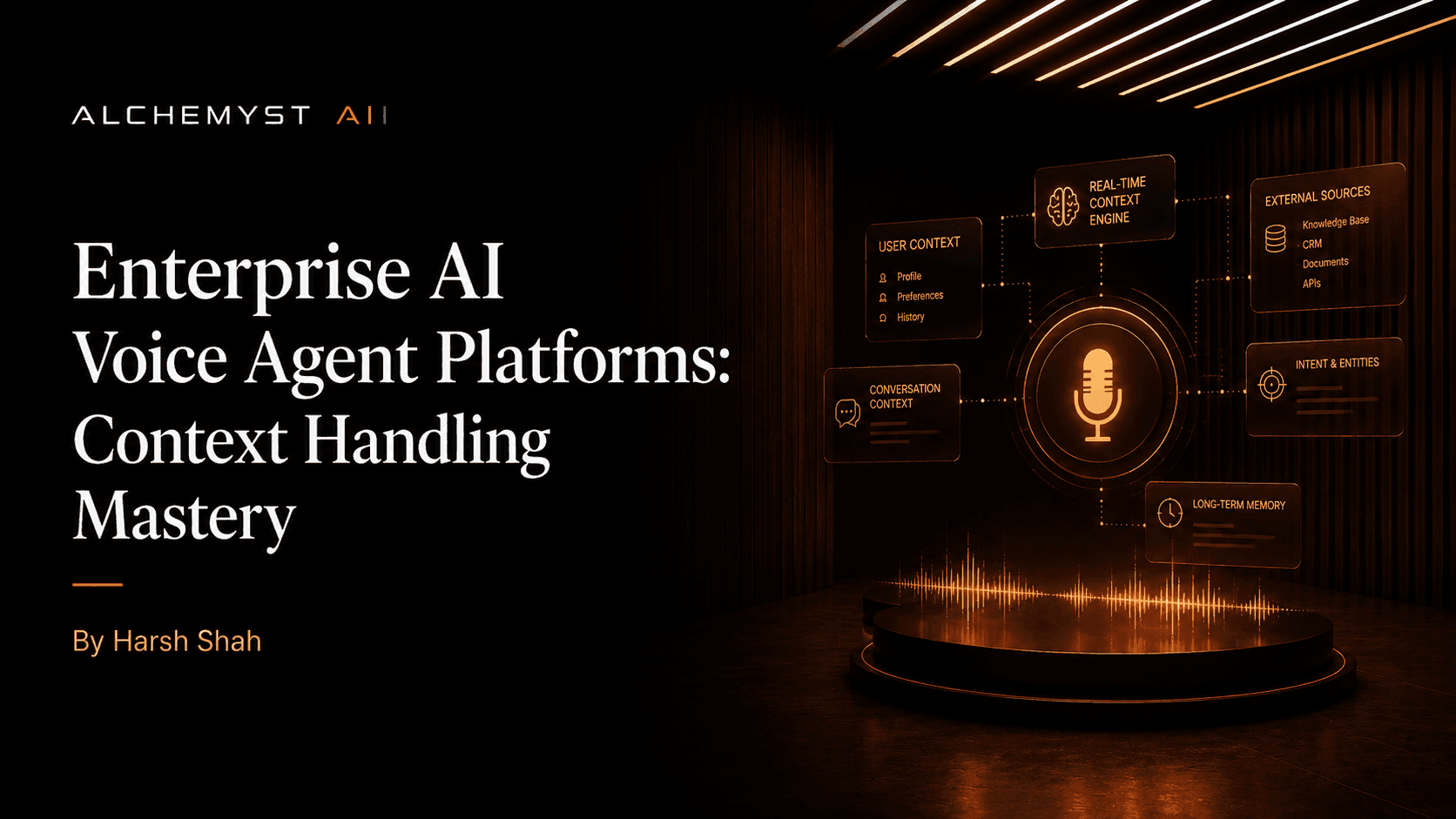

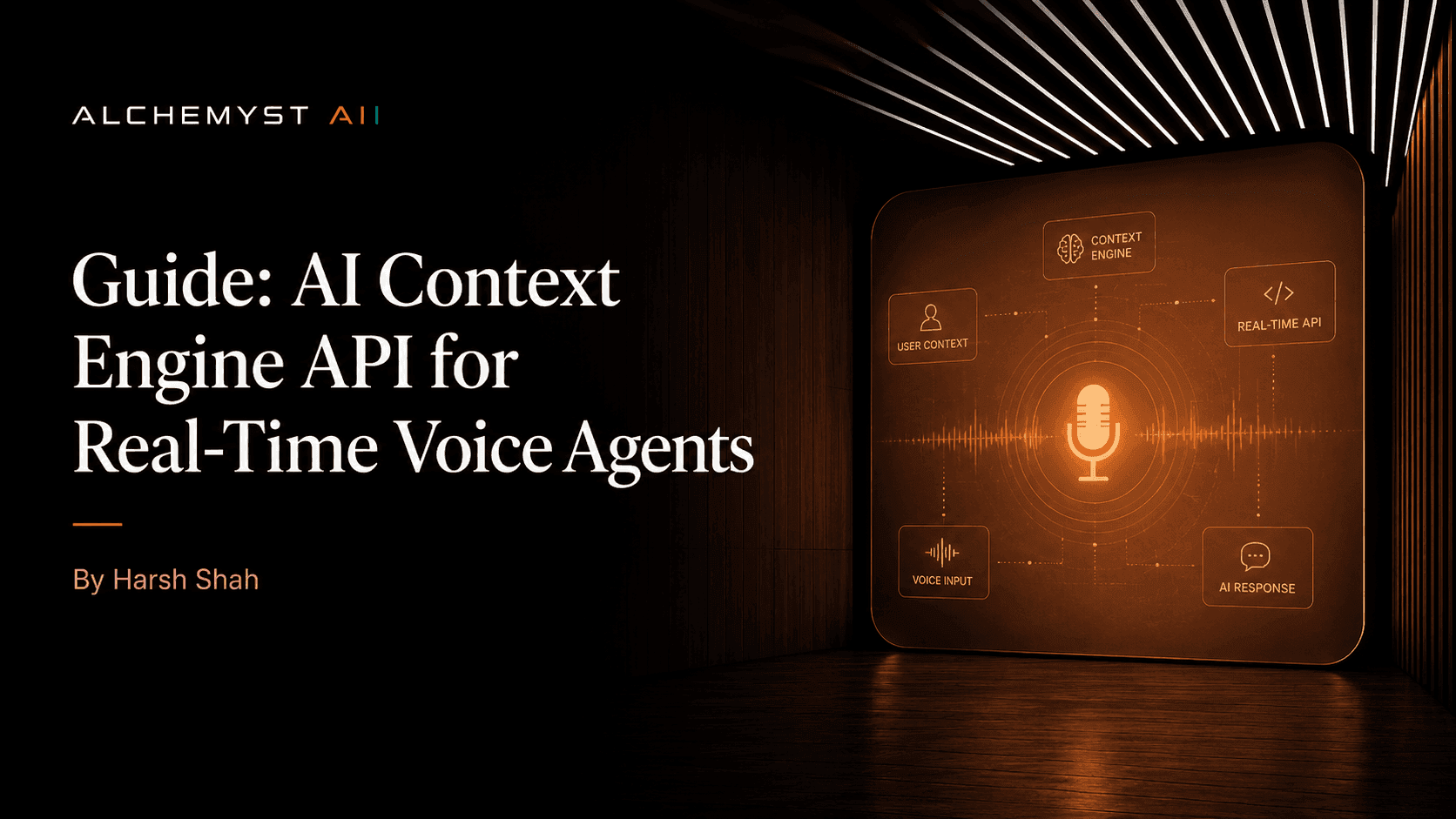

The Technical Engine Behind Guaranteed Outcomes

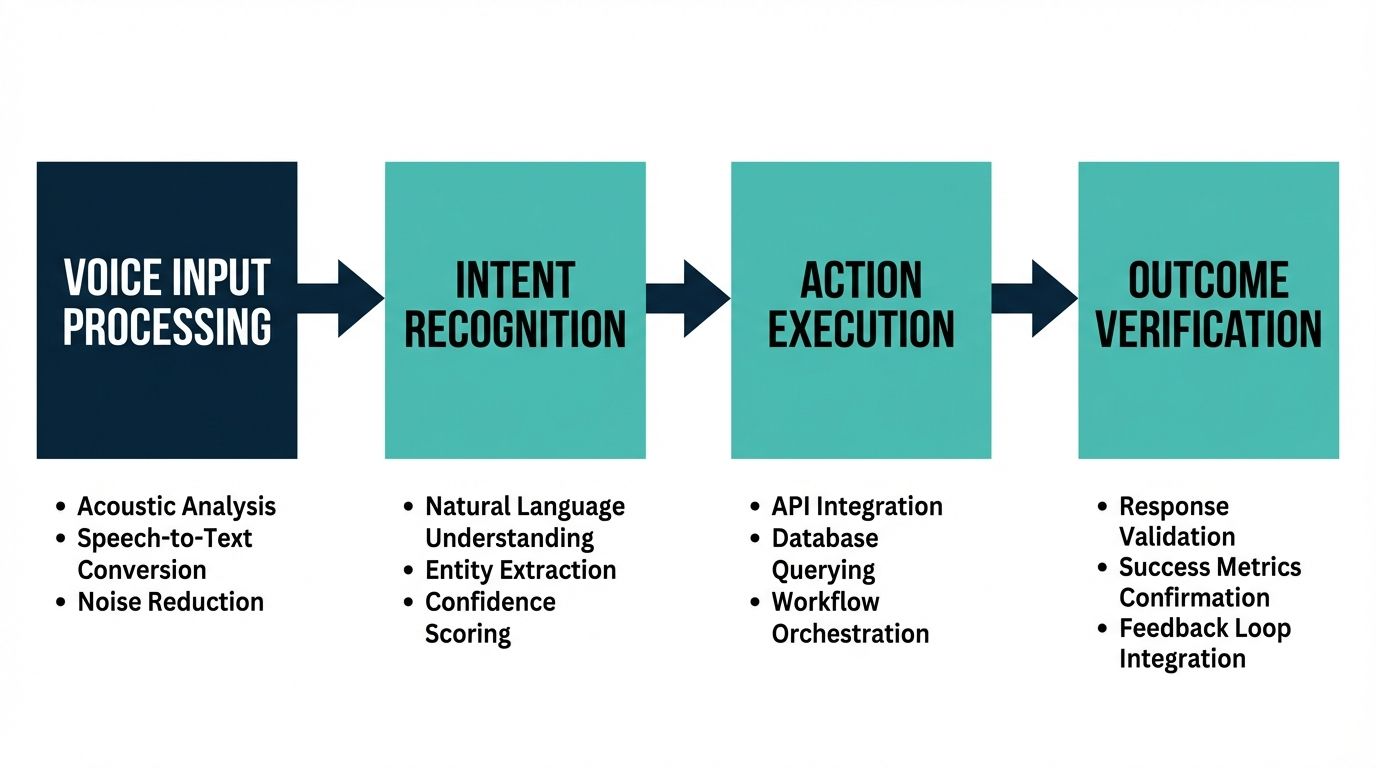

A critical gap in many evaluations of AI pricing models is the failure to explain how a vendor can afford to guarantee outcomes. Standard Large Language Models (LLMs) are inherently probabilistic: they predict each next token from a probability distribution. To operate under an AI voice agent pricing model per qualified outcome, the system must wrap that probabilistic generation in deterministic steps, so that what the agent retrieves and what it executes are controlled rather than guessed. This requires advanced information retrieval and state-of-the-art context engineering.

Alchemyst's Kathan Engine and Context Arithmetic

To achieve the high success rates necessary to survive under an outcome-based pricing model, systems like Alchemyst's Kathan engine utilize a process known as context arithmetic. This is a highly rigorous, set-algebraic computational pipeline designed to systematically determine relevant information for voice agents in milliseconds. Unlike generic prompt engineering, which relies on trial-and-error text instructions, context engineering mathematically restricts the AI's operational boundaries.

The Five-Stage Pipeline for Context Determination

For an AI voice agent to achieve a qualified outcome, it must process enterprise data accurately in real time. The Kathan engine accomplishes this through a five-stage pipeline:

- Information Retrieval and Ingestion: The system securely ingests massive volumes of unstructured enterprise data, structuring it into high-dimensional vector embeddings optimized for voice-specific queries.

- Semantic Similarity Search: When a user speaks, the engine instantly queries the vector database, identifying the closest mathematical matches to the user's intent, ignoring conversational filler and extracting core meaning.

- Metadata Filtering: The system applies strict deterministic rules, filtering out data that does not belong to the user's specific account or access level so that one caller's records are never surfaced to another.

- Deduplication via Set Algebra: To prevent the LLM from becoming confused by conflicting information, the Kathan engine mathematically intersects and subtracts contextual payloads, leaving only a singular, definitive truth for the agent to reference.

- Dynamic Ranking and Payload Assembly: The refined data is ranked by immediate relevance and injected into the voice agent's operational memory, allowing it to respond confidently and ground its answers in verified facts.
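The five stages can be illustrated with a toy pipeline. This is not Alchemyst's Kathan code, whose context arithmetic is proprietary; it is a minimal sketch under stated assumptions. It swaps in a bag-of-words vector for a real embedding model and assumes each chunk carries three hypothetical metadata fields: an `account_id` for the access filter, a `fact_key` naming the fact the chunk asserts, and an `updated_at` version number. "Deduplication" is interpreted here as discarding older chunks that assert the same fact. The similarity and metadata stages each produce a set, and stages 3 and 4 combine them with set intersection and set difference.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    account_id: str  # hypothetical: owner of the record (metadata filter)
    fact_key: str    # hypothetical: which fact this chunk asserts (deduplication)
    updated_at: int  # hypothetical: version stamp, newer wins on conflict


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words term-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def ingest(chunks: list[Chunk]) -> dict[Chunk, Counter]:
    """Stage 1: vectorize every chunk once, at ingestion time."""
    return {chunk: embed(chunk.text) for chunk in chunks}


def assemble_context(query: str, index: dict[Chunk, Counter], account_id: str,
                     k: int = 3, min_score: float = 0.1) -> str:
    q = embed(query)
    # Stage 2: similarity search -> set of chunks close enough to the query.
    scores = {chunk: cosine(q, vec) for chunk, vec in index.items()}
    similar = {chunk for chunk, s in scores.items() if s >= min_score}
    # Stage 3: metadata filter -> set of chunks this caller may see; intersect.
    permitted = {chunk for chunk in index if chunk.account_id == account_id}
    candidates = similar & permitted
    # Stage 4: deduplication by set difference -> drop chunks superseded by a
    # newer chunk asserting the same fact.
    superseded = {
        old for old in candidates for new in candidates
        if new.fact_key == old.fact_key and new.updated_at > old.updated_at
    }
    current = candidates - superseded
    # Stage 5: rank by similarity and assemble the top k into the prompt payload.
    ranked = sorted(current, key=lambda chunk: scores[chunk], reverse=True)[:k]
    return "\n".join(chunk.text for chunk in ranked)


index = ingest([
    Chunk("Order 1042 shipped on Monday via ground freight", "acct-1", "order-1042-status", 1),
    Chunk("Order 1042 delivered to the front desk on Thursday", "acct-1", "order-1042-status", 2),
    Chunk("Order 2077 shipped on Monday via air freight", "acct-2", "order-2077-status", 1),
])
print(assemble_context("Where is order 1042?", index, account_id="acct-1"))
# Order 1042 delivered to the front desk on Thursday
```

The intersection in stage 3 is why the `acct-2` record never reaches the prompt even though it matches the word "order"; the set difference in stage 4 is why only the newer of the two conflicting order-1042 records survives.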

Without this deep architectural commitment to context handling, an AI vendor simply cannot afford to offer an AI voice agent pricing model per qualified outcome. Teams building on generic APIs typically lack the middleware infrastructure to execute this five-stage pipeline effectively, resulting in failed outcomes and abandoned migrations.

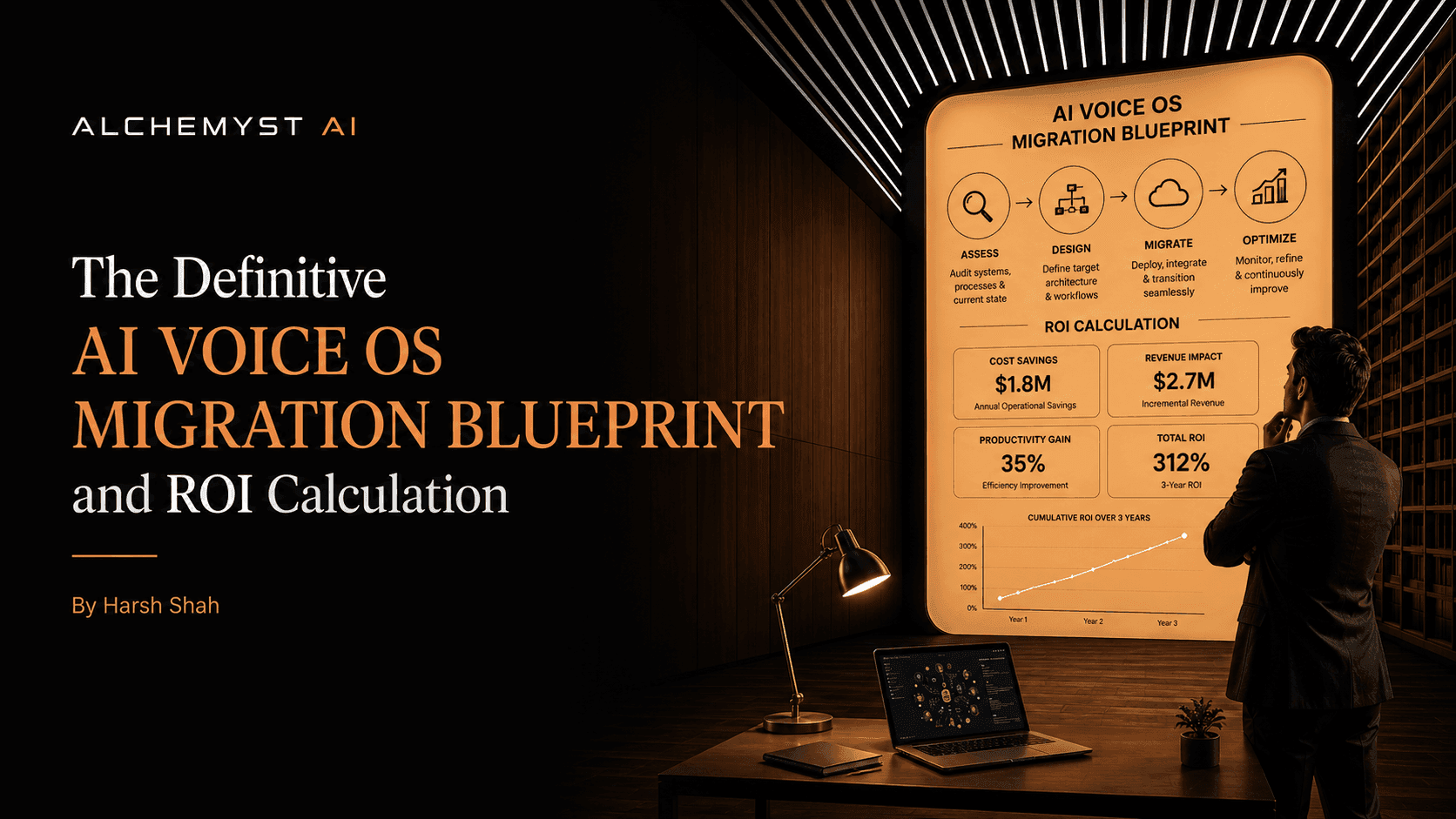

The Definitive Migration Blueprint: Implementing AI Voice OS

Transitioning from a legacy per-minute contact center or a rudimentary API script to a context-aware AI Voice OS requires a comprehensive migration blueprint. Enterprises dissatisfied with existing voice AI deployments must approach this transition with a structured framework designed to maximize ROI.

Step 1: Technical Integration and Data Migration

The foundation of outcome-based AI is deeply integrated enterprise knowledge. The migration begins with a thorough audit of existing data silos: CRM platforms, knowledge bases, ticketing systems, and internal wikis. Utilizing robust integration protocols, this data is continuously synced with the Voice OS's context engine. Unlike API-first platforms that require developers to build custom data connectors, enterprise-grade solutions provide secure, out-of-the-box ingestion pipelines that automate the transition of historical context.
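One common way to keep a context store continuously synced without re-ingesting everything is to fingerprint each record and reprocess only what changed. Below is a minimal sketch under that assumption. `ContextStore`, the source-prefixed document IDs, and the change-report format are hypothetical. The sketch also assumes each sync receives a full combined snapshot of all sources, and a real pipeline would re-embed changed documents where this one just stores the text.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class ContextStore:
    """Hypothetical store that tracks a SHA-256 fingerprint per source document."""
    documents: dict[str, str] = field(default_factory=dict)
    fingerprints: dict[str, str] = field(default_factory=dict)

    def sync(self, snapshot: dict[str, str]) -> dict[str, list[str]]:
        """Apply a full snapshot of all sources, touching only what changed."""
        added, updated = [], []
        for doc_id, text in snapshot.items():
            digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
            previous = self.fingerprints.get(doc_id)
            if previous == digest:
                continue  # unchanged: skip re-ingestion (and re-embedding)
            (added if previous is None else updated).append(doc_id)
            self.fingerprints[doc_id] = digest
            self.documents[doc_id] = text
        # Documents missing from the snapshot are dropped so stale context can't be retrieved.
        removed = [doc_id for doc_id in self.fingerprints if doc_id not in snapshot]
        for doc_id in removed:
            del self.fingerprints[doc_id]
            del self.documents[doc_id]
        return {"added": added, "updated": updated, "removed": removed}


store = ContextStore()
print(store.sync({"crm:1": "Plan: Basic", "kb:7": "Returns accepted within 30 days"}))
# {'added': ['crm:1', 'kb:7'], 'updated': [], 'removed': []}
print(store.sync({"crm:1": "Plan: Premium", "kb:7": "Returns accepted within 30 days"}))
# {'added': [], 'updated': ['crm:1'], 'removed': []}
print(store.sync({"crm:1": "Plan: Premium"}))
# {'added': [], 'updated': [], 'removed': ['kb:7']}
```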

Step 2: Defining the ROI Framework and Success Metrics

Before full deployment, technical evaluators and business leaders must collaboratively define exactly what constitutes a qualified outcome. This involves mapping out the entire customer lifecycle and identifying high-friction touchpoints. Metrics must be strictly boolean (True/False): Did the agent book the meeting? Did the agent resolve the billing dispute? Did the agent capture the lead's email and budget? Establishing these hard parameters ensures the AI voice agent pricing model per qualified outcome is contractually enforceable.

Step 3: Advanced Security and Compliance Deployment

Enterprise deployments mandate uncompromising security. The migration blueprint must include thorough penetration testing, SOC 2 compliance verification, and data residency audits. Because context-aware AI processes highly sensitive conversational data, the architecture must ensure that vector embeddings and conversational logs are isolated per tenant. Proper metadata filtering within the context arithmetic pipeline is what prevents a caller from manipulating the agent into revealing another user's personally identifiable information (PII).
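Isolation is easiest to enforce when the retrieval layer cannot even express a cross-tenant query. Below is a minimal sketch of that design choice. It assumes records are partitioned by tenant and account, and that the `Session` identity is set by the authentication step (for example, multi-factor verification) and never by anything the caller says. `Session` and `TenantStore` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Session:
    """Identity established by authentication, never parsed from the conversation."""
    tenant_id: str   # the enterprise customer
    account_id: str  # the verified caller within that tenant


@dataclass
class TenantStore:
    # tenant_id -> account_id -> records; hypothetical layout for illustration.
    _partitions: dict[str, dict[str, list[str]]] = field(default_factory=dict)

    def add(self, tenant_id: str, account_id: str, record: str) -> None:
        self._partitions.setdefault(tenant_id, {}).setdefault(account_id, []).append(record)

    def records_for(self, session: Session) -> list[str]:
        """The only read path, scoped entirely by the authenticated session.

        There is deliberately no parameter for an account ID taken from the
        transcript, so "read me Bob's history" has no way to reach Bob's data.
        """
        return list(self._partitions.get(session.tenant_id, {}).get(session.account_id, []))


store = TenantStore()
store.add("bank-x", "alice", "Card ending 1234: frozen")
store.add("bank-x", "bob", "Card ending 9876: active")
alice = Session(tenant_id="bank-x", account_id="alice")
print(store.records_for(alice))  # ['Card ending 1234: frozen']
```

Returning a copy of the list keeps callers from mutating the stored partition through the read path.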

Calculating True ROI: Outcome vs. Usage

To truly understand the commercial advantage of the AI voice agent pricing model per qualified outcome, we must conduct a specific cost analysis. Consider an enterprise processing 50,000 inbound customer service calls per month.

With a vendor charging $0.15 per minute and an average call length of 6 minutes, each call costs $0.90, so the enterprise pays the AI vendor $45,000 for the month. Now assume that, because a context-free agent struggles with intent, 40% of these calls end in failure or escalation. Those 20,000 failed calls account for $18,000 of the vendor bill, and the enterprise must still pay human agents to handle them. The actual ROI is diluted by paying twice for every failure.

Conversely, under an outcome-based model the vendor might charge $1.50 per qualified outcome. The per-unit price looks higher, but the enterprise pays only for success. If the Kathan engine resolves 35,000 calls (70%) autonomously, the bill is $52,500, and every billed call is one that no human agent had to handle. The 15,000 calls that required human escalation still cost agent time, but they generate no vendor fees. Note that the two scenarios assume different resolution rates (60% versus 70%), and that both work out to $1.50 per resolved call ($45,000 ÷ 30,000 and $52,500 ÷ 35,000). The difference is that the outcome model fixes that price in the contract, so it cannot rise when the agent fails more often; vendor spend stays predictable and structurally tied to business value.
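The arithmetic in the two scenarios can be reproduced with a short script, which makes it easy to rerun with your own call volume, rates, and resolution rates. The inputs are the illustrative figures from this section (50,000 calls, $0.15 per minute, 6-minute average, 40% failure, $1.50 per outcome, 35,000 resolutions), not benchmarks. Amounts are in integer cents to avoid floating-point rounding, and human-agent costs for escalated calls are deliberately left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    vendor_cost_cents: int
    resolved_calls: int
    escalated_calls: int
    vendor_spend_on_failures_cents: int

    def cost_per_resolution_cents(self) -> float:
        return self.vendor_cost_cents / self.resolved_calls


def per_minute(calls: int, rate_cents: int, avg_minutes: int, failure_pct: int) -> Scenario:
    per_call = rate_cents * avg_minutes  # every call is billed, resolved or not
    failed = calls * failure_pct // 100
    return Scenario("per-minute", calls * per_call, calls - failed, failed, failed * per_call)


def per_outcome(calls: int, fee_cents: int, resolved: int) -> Scenario:
    # Only qualified outcomes are billed; escalated calls generate no vendor fee.
    return Scenario("per-outcome", resolved * fee_cents, resolved, calls - resolved, 0)


for s in (per_minute(50_000, rate_cents=15, avg_minutes=6, failure_pct=40),
          per_outcome(50_000, fee_cents=150, resolved=35_000)):
    print(f"{s.name}: vendor ${s.vendor_cost_cents / 100:,.2f}, "
          f"escalated {s.escalated_calls:,}, "
          f"paid for failures ${s.vendor_spend_on_failures_cents / 100:,.2f}, "
          f"per resolution ${s.cost_per_resolution_cents() / 100:.2f}")
```

Rerunning with a different `failure_pct` or `resolved` value shows how sensitive each model's vendor bill is to the agent's resolution rate.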

Why Developer-First APIs Miss the Enterprise Mark

As businesses evaluate their AI strategies, it is essential to recognize the gaps left by competitors in the market. Many top-tier platforms promote themselves as the ultimate developer playgrounds. They offer open dashboards, extensive documentation, and a toolkit to build custom agents. However, they consistently miss the mark on providing a structured ROI framework. By merely supplying the computational "picks and shovels," these vendors absolve themselves of the final business result.

Enterprises are realizing that they do not want to be in the business of building and maintaining voice agents; they want to buy resolved customer problems. The AI voice agent pricing model per qualified outcome is the antidote to the API-first trap. It forces the vendor to transition from a software provider into a strategic partner whose revenue is directly coupled to the client's operational success.

Conclusion: The Inevitable Future of Voice AI

The transition toward the AI voice agent pricing model per qualified outcome is not merely a pricing trend; it is an inevitable evolution in enterprise technology. As Large Language Models become commoditized, the true differentiator lies in context engineering, proprietary retrieval pipelines like Alchemyst's Kathan engine, and commercial models that guarantee results. By demanding outcome-based pricing, businesses can eliminate wasted capital, mandate architectural excellence from their vendors, and deploy voice AI systems that act as genuine drivers of revenue and operational efficiency.