The Crisis in Global Customer Engagement

As enterprises scale globally, the demand for seamless, localized customer support has never been higher. Yet businesses deploying conventional AI chatbots and basic voice agents quickly encounter severe operational bottlenecks. The mandate for a true multilingual AI voice OS for high-volume customer interactions is clear, but the market is flooded with superficial solutions. Most commercial off-the-shelf tools are informational band-aids that rely on basic APIs and static scripting rather than robust, state-aware conversational intelligence. For businesses handling hundreds of thousands of daily interactions, a structurally flawed system means wasted budget, falling customer satisfaction, and a damaged brand reputation. It is time to move beyond simple translation engines and examine the architecture of enterprise-grade voice operating systems.

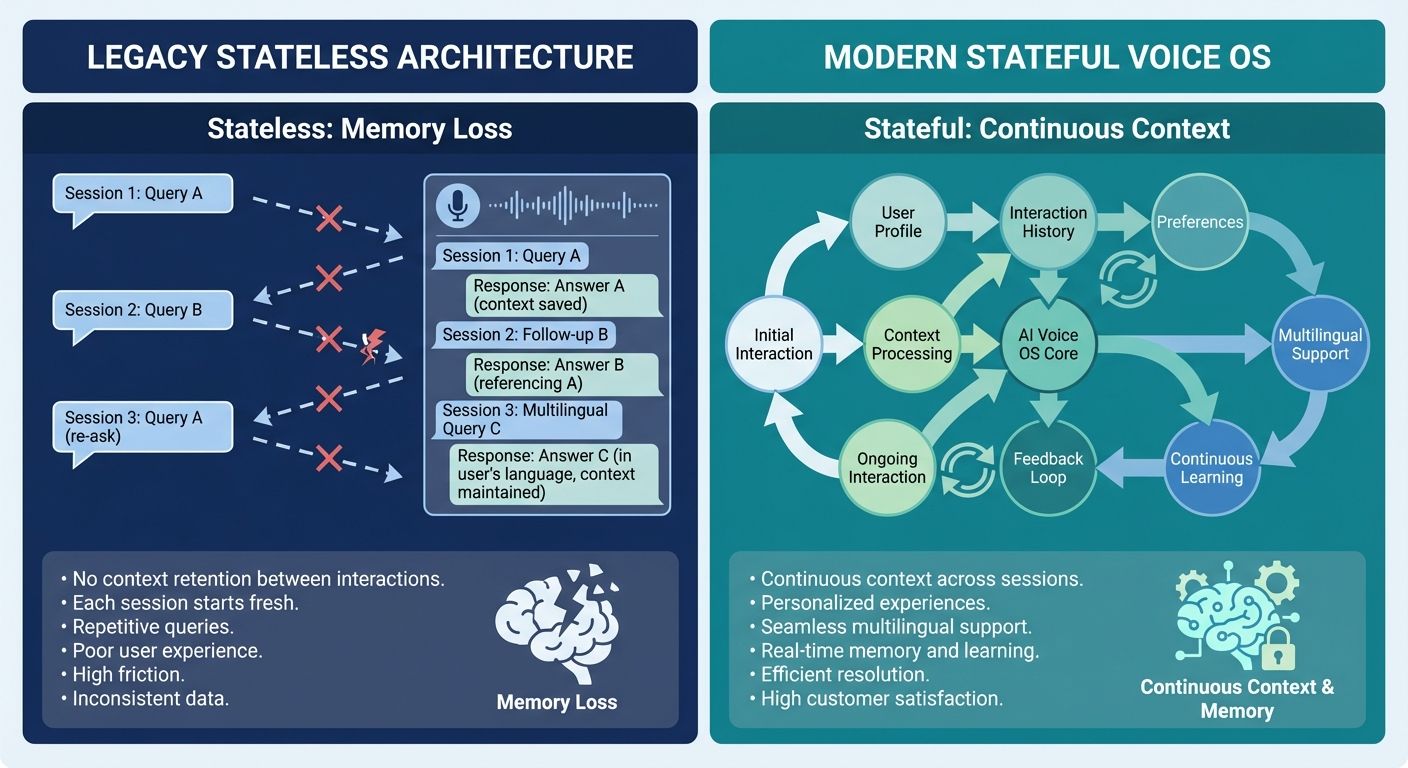

The Structural Flaws of Legacy Voice AI and Stateless Architecture

To understand the necessity of an advanced multilingual AI voice OS for high-volume customer interactions, technical evaluators and business leaders must first analyze the failures of existing systems. Many B2B decision-makers, marketing managers, and CFOs, particularly in fast-paced sectors like EdTech and Finance, express deep frustration over the poor return on investment (ROI) from their voice AI deployments. The root cause of this widespread failure is architectural: these systems are stateless.

The Epidemic of Low Connection Rates

Current industry data reveals a prevalent issue in outbound voice AI campaigns: dismal connection rates hovering between 8% and 15%. This is not merely a symptom of bad lead quality; it is a direct consequence of deploying stateless AI agents. A stateless agent treats every single interaction as a completely new event. It lacks the ability to retain critical conversational context, such as prior call outcomes, previously discussed pain points, or the customer's preferred language from a past interaction. When an AI repeatedly calls a prospect without acknowledging previous drop-off points or prior commitments, the interaction feels incredibly robotic, leading to immediate hang-ups and devastatingly low conversion rates.
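
The difference between a stateless and a stateful agent can be made concrete with a minimal sketch. Everything here is an illustrative assumption rather than any vendor's implementation: the `CallRecord` fields, the `ConversationStore` class, and the in-memory dictionary standing in for a real database are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class CallRecord:
    outcome: str                      # e.g. "dropped", "callback_requested"
    language: str                     # language the customer actually spoke
    pain_points: List[str] = field(default_factory=list)


class ConversationStore:
    """Hypothetical in-memory store; a real system would use a database."""

    def __init__(self) -> None:
        self._history: Dict[str, List[CallRecord]] = {}

    def record(self, customer_id: str, call: CallRecord) -> None:
        self._history.setdefault(customer_id, []).append(call)

    def last_call(self, customer_id: str) -> Optional[CallRecord]:
        calls = self._history.get(customer_id, [])
        return calls[-1] if calls else None


def stateless_context(customer_id: str) -> dict:
    # A stateless agent starts every call from the same blank defaults.
    return {"language": "en", "last_outcome": None, "pain_points": []}


def stateful_context(store: ConversationStore, customer_id: str) -> dict:
    # A stateful agent reuses what earlier calls already established.
    last = store.last_call(customer_id)
    if last is None:
        return stateless_context(customer_id)
    return {
        "language": last.language,
        "last_outcome": last.outcome,
        "pain_points": list(last.pain_points),
    }
```

With a prior call recorded as `CallRecord("dropped", "hi", ["pricing"])`, the stateful context opens the next call in Hindi and knows the previous call dropped, while the stateless context returns the same defaults every time.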

The Anatomy of a ₹3 Lakh Campaign Failure

Budget waste in outbound voice AI is a critical pain point that generic platforms fail to address. Consider a recent forensic analysis of a ₹3 lakh campaign failure. An enterprise deployed a standard, highly marketed voice agent platform to reach a diverse, multilingual customer base. The campaign failed spectacularly because of poor lead segmentation, flawed language localization, weak script optimization, and a complete lack of retargeting strategy. The system simply translated English scripts into local dialects using basic APIs, without accounting for cultural nuance or regional interaction norms. Furthermore, because the system lacked conversational memory, its retargeting consisted of repeating the exact same introductory script to users who had already engaged with the brand. This lack of state management burned through the campaign budget with near-zero ROI.

Why Voice AI Agents Sound Robotic: The Six Core Reasons

Businesses are desperately seeking ways to make their automated systems sound more human. The prevalent assumption is that AI sounds robotic simply because of the voice synthesis technology. However, closer analysis shows that the issue stems primarily from a lack of conversational context and personalization. Here are the six key reasons why legacy voice AI agents sound robotic:

- Total Statelessness: The inability to remember what was said five seconds ago, let alone in a previous call.

- Basic Translation over Localization: Word-for-word translation engines that fail to capture the idiomatic expressions and cultural tone necessary for true multilingual communication.

- Disconnected Data Silos: Failure to seamlessly integrate with real-time CRM data, meaning the AI cannot reference a customer's specific account history or purchase data during the conversation.

- Static Scripting Constraints: Relying on rigid decision trees rather than dynamic, generative responses based on current conversational context.

- Latency and Turn-Taking Failures: Slow processing that produces unnatural pauses, interrupts the user, or fails to recognize conversational filler words.

- Lack of Sentiment Adaptability: The inability to detect a customer's frustration and dynamically alter the tone, pacing, or conversational path in real time.

The Game Changer: Context Engineering vs. Prompt Engineering

When evaluating a multilingual AI voice OS for high-volume customer interactions, developers and technical evaluators must look past superficial features and examine the core conversational engine. Most competitors boast about their 'prompt engineering' capabilities. However, prompt engineering alone is insufficient for high-volume, highly complex enterprise environments.

The Limitations of Prompt Engineering

Prompt engineering involves tweaking the initial instructions given to a Large Language Model (LLM) to guide its behavior. While useful for simple, single-turn tasks, it completely breaks down in multi-turn, multilingual voice interactions. You cannot simply 'prompt' an AI to magically remember complex, dynamically shifting CRM states across a 15-minute conversation spanning multiple languages. Prompt engineering attempts to force static rules onto a dynamic environment, leading to the rigid, robotic interactions that plague traditional call centers.

The Superiority of Context Engineering

The solution lies in Context Engineering. Unlike prompt engineering, which focuses on the static instructions, context engineering focuses on the dynamic environment. It is the systematic, architectural approach to managing conversational state, external knowledge integration (such as Retrieval-Augmented Generation, or RAG), and real-time reasoning. A robust multilingual AI voice OS utilizes context engineering to ensure that every utterance by the AI is informed by the user's complete historical profile, their real-time sentiment, and the specific transactional goals of the call.

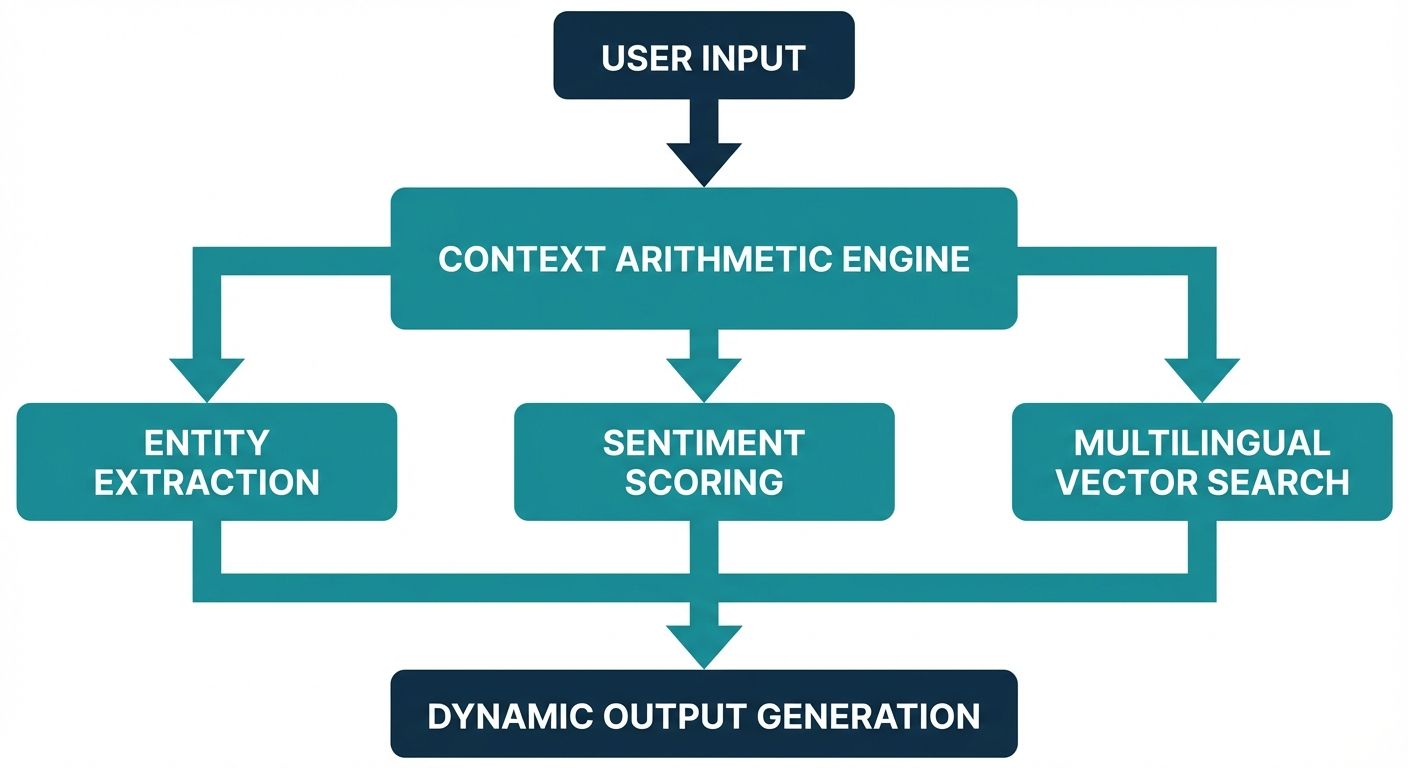

Inside the Kathan Engine: A Technical Primer on Context Arithmetic

At the forefront of context engineering is Alchemyst's proprietary Kathan engine. Serving as a true Enterprise Voice OS, the Kathan engine is purposefully built to handle the complexities of high-volume customer interactions across the entire customer lifecycle. The engine operates on a sophisticated mathematical framework known as Context Arithmetic.

Context arithmetic is the systematic process of determining precisely which pieces of information are relevant to the AI agent at any given moment of the conversation. Dumping a massive, unorganized payload of CRM data into the LLM's context window increases latency and invites hallucinations. Context arithmetic instead dynamically calculates the weight and relevance of historical data, real-time inputs, and localized knowledge bases.
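
The idea of weighting context instead of sending everything can be sketched in a few lines. The scoring formula below (relevance multiplied by an exponential recency decay) and the greedy token-budget selection are assumptions chosen for illustration; the article does not disclose the actual formula, and `ContextItem`, `score`, and `select_context` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ContextItem:
    text: str
    relevance: float   # 0..1, assumed to come from an upstream intent matcher
    age_days: float    # how old the data point is
    tokens: int        # approximate size in the LLM context window


def score(item: ContextItem, half_life_days: float = 30.0) -> float:
    # Illustrative formula: relevance discounted by exponential recency decay.
    recency = 0.5 ** (item.age_days / half_life_days)
    return item.relevance * recency


def select_context(items: List[ContextItem], token_budget: int) -> List[ContextItem]:
    # Greedy selection: take the highest-scoring items that still fit the budget.
    chosen: List[ContextItem] = []
    remaining = token_budget
    for item in sorted(items, key=score, reverse=True):
        if item.tokens <= remaining:
            chosen.append(item)
            remaining -= item.tokens
    return chosen
```

Under these assumptions, a fresh refund policy document with relevance 0.9 outranks a 30-day-old order with the same relevance (score 0.45), and both outrank a fresh marketing brochure with relevance 0.2. With a budget that fits only two items, the brochure never reaches the context window.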

The Five-Stage Pipeline for Context Determination

The Kathan engine achieves its human-like reasoning through a rigorous five-stage pipeline for context determination. This pipeline is what separates a basic chatbot from a true multilingual AI voice OS for high-volume customer interactions:

- Stage 1: Multi-Modal Ingestion and State Retrieval. The system instantly pulls the user's interaction history, preferred language profile, and active CRM state before the call even connects.

- Stage 2: Dynamic Intent Parsing. As the user speaks, the engine transcribes the audio, detects the language, and parses the underlying commercial or support intent, filtering out background noise and conversational filler.

- Stage 3: Context Arithmetic Weighting. The engine mathematically scores available data points. If a user asks about a refund, the engine weights recent purchase history and refund policy documents higher than introductory marketing materials.

- Stage 4: Cross-Lingual Knowledge Retrieval (RAG). The system retrieves the most heavily weighted information from external databases using vector search. Crucially, it retrieves this information by concept rather than by keyword, allowing it to map English knowledge base articles into native Hindi, Spanish, or Mandarin responses on the fly.

- Stage 5: State-Aware Prompt Synthesis. Finally, the mathematically optimized context is synthesized into a highly efficient, real-time prompt for the LLM. This guarantees a response that is accurate, culturally localized, and contextually aware, all within milliseconds.
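
The five stages above can be read as a chain of functions that each enrich a shared turn object. The following is a toy sketch of that shape only, not the Kathan engine's code: the `Turn` class, the `CRM` and `KNOWLEDGE` dictionaries, the keyword-based intent check, and the hard-coded weights are all placeholder assumptions standing in for a real CRM, speech and NLU models, and a vector index.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Turn:
    customer_id: str
    utterance: str
    state: Dict[str, str] = field(default_factory=dict)
    language: str = "en"
    intent: str = "unknown"
    weights: Dict[str, float] = field(default_factory=dict)
    documents: List[str] = field(default_factory=list)
    prompt: str = ""


# Placeholder data; a real system would query a CRM and a vector index.
CRM = {"c42": {"language": "hi", "last_order": "course bundle"}}
KNOWLEDGE = {
    "refund_policy": "Refunds are issued within 7 days.",
    "marketing_intro": "Welcome to our platform.",
}


def retrieve_state(turn: Turn) -> Turn:          # Stage 1
    turn.state = dict(CRM.get(turn.customer_id, {}))
    turn.language = turn.state.get("language", "en")
    return turn


def parse_intent(turn: Turn) -> Turn:            # Stage 2 (keyword stub, not real NLU)
    turn.intent = "refund" if "refund" in turn.utterance.lower() else "general"
    return turn


def weight_context(turn: Turn) -> Turn:          # Stage 3 (hard-coded example weights)
    if turn.intent == "refund":
        turn.weights = {"refund_policy": 1.0, "marketing_intro": 0.1}
    else:
        turn.weights = {"refund_policy": 0.2, "marketing_intro": 0.6}
    return turn


def retrieve_documents(turn: Turn, top_k: int = 1) -> Turn:   # Stage 4 (dict lookup, not vector search)
    ranked = sorted(turn.weights, key=turn.weights.get, reverse=True)
    turn.documents = [KNOWLEDGE[name] for name in ranked[:top_k]]
    return turn


def synthesize_prompt(turn: Turn) -> Turn:       # Stage 5
    turn.prompt = (
        f"Respond in language '{turn.language}'. "
        f"Customer state: {turn.state}. "
        f"Intent: {turn.intent}. "
        f"Reference: {' '.join(turn.documents)}"
    )
    return turn


PIPELINE = [retrieve_state, parse_intent, weight_context, retrieve_documents, synthesize_prompt]


def run_pipeline(turn: Turn) -> Turn:
    for stage in PIPELINE:
        turn = stage(turn)
    return turn
```

Calling `run_pipeline(Turn("c42", "I want a refund"))` yields a prompt that asks for a Hindi response, carries the customer's last order, and references only the refund policy. Keeping each stage a plain function makes it easy to swap a stub for a production component without touching the others.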

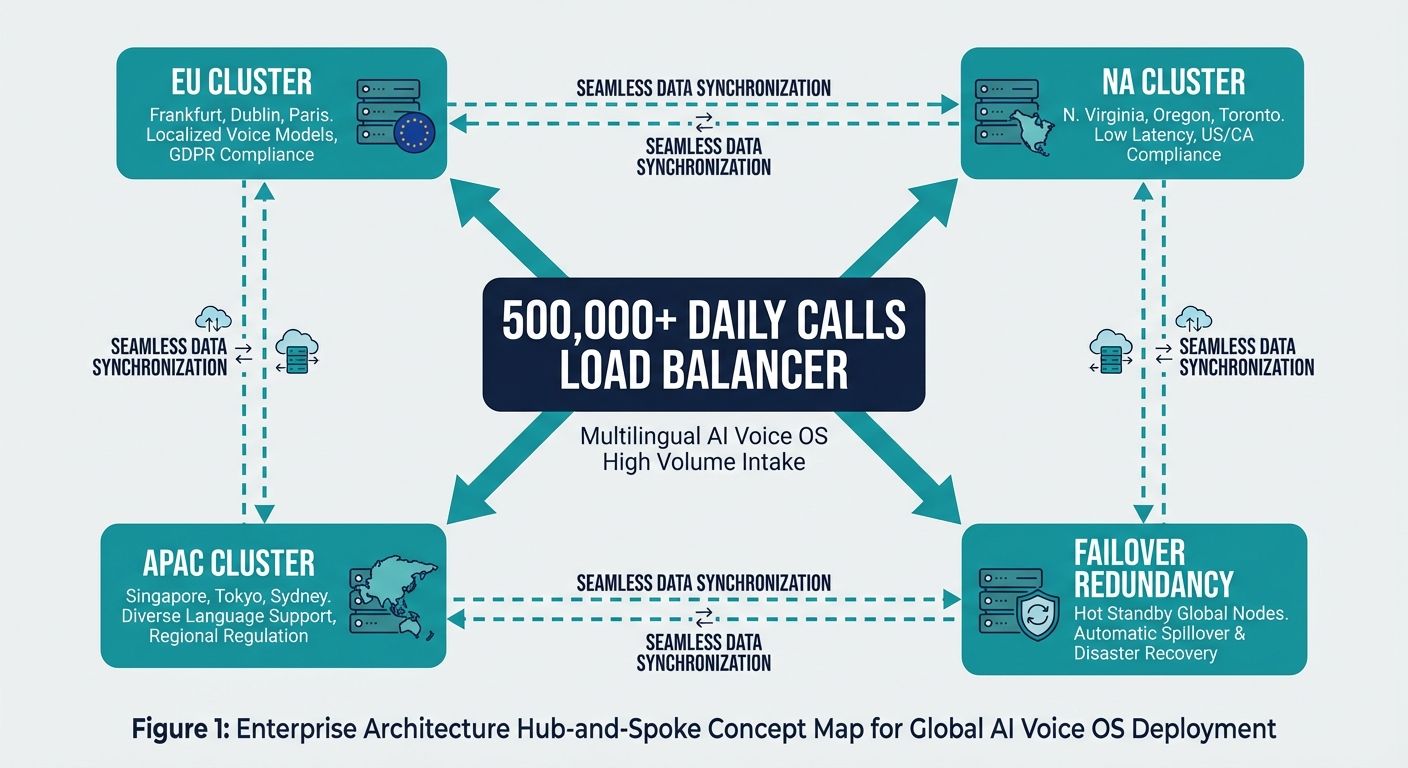

Scaling to 500,000+ Daily Calls: Enterprise Deployment Strategies

Deploying a multilingual AI voice OS for high-volume customer interactions requires an infrastructure capable of handling massive concurrency without degradation in response quality. The Kathan Enterprise Voice OS has been proven in real-world deployments, where it routinely manages over 500,000 daily calls. Achieving this level of scale requires moving beyond basic API wrappers and implementing deep, structural deployment strategies.

Advanced Lead Segmentation and Multilingual Routing

High-volume campaigns fail when a one-size-fits-all approach is applied to a diverse audience. A context-aware OS automatically segments leads based on geographic data, historical language preferences, and previous interaction outcomes. By the time the AI initiates the connection, it has already configured its localization engine to speak in the precise dialect and cultural tone best suited for that specific prospect.
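
As a rough sketch of what such segmentation might look like, the snippet below groups leads by routed language and previous outcome. The `Lead` fields, the `REGION_DEFAULT_LANGUAGE` mapping, and the rule that an observed language preference overrides a geographic guess are simplifying assumptions for illustration; a single region rarely maps to one language in practice.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

# Illustrative region-to-language defaults (ISO 3166-2 region, ISO 639-1 language).
REGION_DEFAULT_LANGUAGE = {"IN-MH": "mr", "IN-TN": "ta", "IN-DL": "hi"}


@dataclass
class Lead:
    lead_id: str
    region: str
    preferred_language: Optional[str] = None   # learned from past calls, if any
    last_outcome: Optional[str] = None         # e.g. "dropped", "interested"


def route_language(lead: Lead, fallback: str = "en") -> str:
    # An observed preference beats a geographic guess.
    if lead.preferred_language:
        return lead.preferred_language
    return REGION_DEFAULT_LANGUAGE.get(lead.region, fallback)


def segment(leads: List[Lead]) -> Dict[Tuple[str, str], List[str]]:
    # Group lead IDs by (language, previous outcome) so each group can get its own script.
    segments: Dict[Tuple[str, str], List[str]] = defaultdict(list)
    for lead in leads:
        key = (route_language(lead), lead.last_outcome or "never_contacted")
        segments[key].append(lead.lead_id)
    return dict(segments)
```

A lead in Tamil Nadu with no call history lands in the `("ta", "never_contacted")` segment, while a lead from the same region who previously spoke English and dropped off lands in `("en", "dropped")` and can be handled with a different script.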

Intelligent Retargeting and Lifecycle Management

Because the Kathan engine employs persistent state management, retargeting becomes highly intelligent. If a prospect drops off a call because they were driving, the AI logs this specific state. When the retargeting call occurs the next day, the AI does not start over. Instead, it begins with, 'Hi, I know you were driving yesterday when we spoke, so I'll keep this brief...' This level of contextual continuity drastically improves on the baseline 8% to 15% connection rates, transforming cold outbound metrics into warm, highly engaged conversations.
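
The driving example can be sketched as a small planning function that turns a logged drop-off into an opener and a resume point. The `DropOff` record, the `SCRIPT_STEPS` list, and the opener texts are hypothetical; this shows the general pattern of resuming from persisted state, not how the Kathan engine stores or uses it.

```python
from dataclasses import dataclass
from typing import Optional

SCRIPT_STEPS = ["intro", "needs", "offer", "close"]   # hypothetical call script

OPENERS = {
    "driving": "Hi, I know you were driving when we last spoke, so I'll keep this brief.",
    "busy": "Hi, you mentioned it was a busy moment last time, so I'll be quick.",
}
DEFAULT_OPENER = "Hi, thanks for taking my call."


@dataclass
class DropOff:
    reason: Optional[str]                # e.g. "driving"
    last_completed_step: Optional[str]   # last script step finished before the drop


def plan_retarget(drop: Optional[DropOff]) -> dict:
    # No history: behave like a first call.
    if drop is None:
        return {"opener": DEFAULT_OPENER, "steps": list(SCRIPT_STEPS)}
    opener = OPENERS.get(drop.reason, DEFAULT_OPENER)
    # Resume after the last completed step instead of repeating the intro.
    if drop.last_completed_step in SCRIPT_STEPS:
        start = SCRIPT_STEPS.index(drop.last_completed_step) + 1
    else:
        start = 0
    return {"opener": opener, "steps": SCRIPT_STEPS[start:]}
```

For a prospect logged as `DropOff("driving", "needs")`, the plan acknowledges the earlier call and continues with the `offer` and `close` steps rather than replaying the introduction.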

Accelerating Qualitative Insights and Sentiment Analysis

Beyond simply handling inbound queries or outbound sales, a true multilingual AI voice OS acts as an enterprise's most powerful data collection tool. Traditional Net Promoter Score (NPS) surveys rely on low-converting email forms. Voice AI facilitates richer qualitative feedback capture by engaging customers in conversational surveys.

As the AI conducts these high-volume interactions across multiple languages, it performs immediate sentiment analysis. It detects hesitation, frustration, or delight in the customer's voice, mapping these emotional data points directly back into the CRM. This provides business leaders with a real-time, aggregated dashboard of customer sentiment across global markets, bridging the gap between raw data collection and actionable qualitative insights.
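
A dashboard of this kind ultimately rests on a simple aggregation step. The sketch below assumes each call has already been given a sentiment score between -1 and 1 by some upstream model, which is not shown; the dictionary keys and the `aggregate_sentiment` function are illustrative names, not part of any real product API.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List, Tuple


def aggregate_sentiment(calls: List[dict]) -> Dict[Tuple[str, str], dict]:
    # Bucket per-call sentiment scores by (market, language).
    buckets: Dict[Tuple[str, str], List[float]] = defaultdict(list)
    for call in calls:
        buckets[(call["market"], call["language"])].append(call["sentiment"])
    # Summarize each bucket with a call count and a mean score.
    return {
        key: {"calls": len(scores), "mean_sentiment": mean(scores)}
        for key, scores in buckets.items()
    }
```

Each resulting row, keyed by market and language, could then be written back to the CRM or fed to a reporting layer.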

Alchemyst Kathan: The Definitive Commercial Solution

The market is saturated with basic, developer-centric API wrappers that promise multilingual support but deliver disjointed, stateless, and robotic experiences. Businesses and enterprises seeking to genuinely improve their customer service and experience, particularly those that have been burned by disappointing ROI from previous AI deployments, must adopt a structural solution.

Alchemyst's Kathan engine stands alone as the definitive multilingual AI voice OS for high-volume customer interactions. By replacing stateless architecture with advanced context engineering, integrating real-world CRM data through its five-stage pipeline, and proving its stability across 500,000+ daily calls, Kathan eradicates budget waste and robotic interactions. It empowers global enterprises to connect with their audiences authentically, intelligently, and flawlessly at scale.